Artificial intelligence startup Landing AI has raised $57 million in a Series A funding round led by industrial IoT investor McRock Capital. Insight Partners, Taiwania Capital, Canada Pension Plan Investment Board (CPP Investments), Intel Capital, Samsung Catalyst Fund, Far Eastern Group’s DRIVE Catalyst, and Walsin Lihwa also participated in the round.

The startup’s founder, Andrew Ng, is a co-founder of the Google Brain research lab and a former chief scientist at Baidu. With this fresh funding, Landing AI plans to further improve its product, LandingLens, and to hire extensively to meet its goals. The company plans to increase its team size from 75 to 150 while adding more customers.

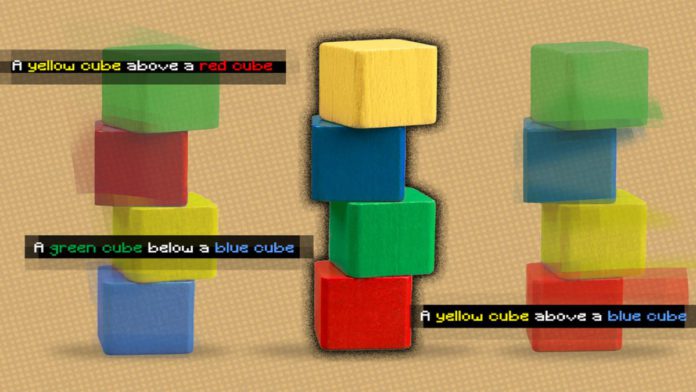

Andrew Ng said, “AI built for 50 million data points doesn’t work when you only have 50 data points. By bringing machine learning to everyone regardless of the size of their data set, the next era of AI will have a real-world impact on all industries.”


He further added that good-quality data is essential to succeeding with artificial intelligence. Founded in 2017, United States-based Landing AI specializes in developing artificial intelligence solutions that address challenging visual inspection problems and generate business value.

Additionally, MacDonald, co-founder and managing partner of McRock Capital, will join Landing AI’s board of directors. “Landing AI will unleash the power of the Industrial IoT one company, one factory, and one manufacturing line at a time,” said MacDonald. He also said he believes Landing AI will be able to bring about a transformation in digital technologies for various markets.

George Mathew, managing director at Insight Partners, said that the need for Landing AI is ever-increasing, and that he believes the company will be able to unlock untapped segments of machine vision projects.