Martin Kon, YouTube’s CFO (Chief Financial Officer) since 2021, is leaving the company to join the artificial intelligence (AI) startup Cohere as its President and COO (Chief Operating Officer).

Kon is an experienced business leader who has worked with globally recognized firms such as Oliver Wyman and Boston Consulting Group. He joined YouTube in 2019, where he was responsible for strategy, finance, business operations, and commercial data analytics, and worked directly with Susan Wojcicki, YouTube’s CEO, and with Google’s corporate CFO.

As Cohere’s COO, Kon will focus on understanding enterprise customers’ needs and bringing relevant commercial products and solutions to market. Aidan Gomez, Cohere’s CEO, added that Kon would also help businesses realize the “enormous value from harnessing the power of language models.”

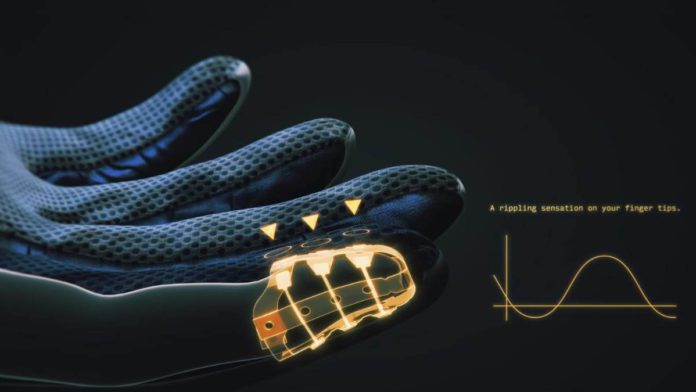

Cohere is a Toronto-based AI startup that focuses on helping businesses adopt and take advantage of natural language processing (NLP) in the real world. The startup caters to use cases such as predictive text generation, copywriting, conversational AI, summarization, and content moderation.

Gomez said, “With Martin’s network and experience, I look forward to ushering in Cohere’s next chapter, one where we establish ourselves as the commercial leader of language AI.”