Intel has made its second-generation neuromorphic processor, Loihi 2, available to advance research into neuromorphic computing, an approach that more closely mimics how biological brains process information. Loihi 2 improves on its predecessor in density, energy efficiency, and other respects. It is part of a broader effort to build chips that work more like a biological brain, which could lead to significant gains in computing performance and efficiency.

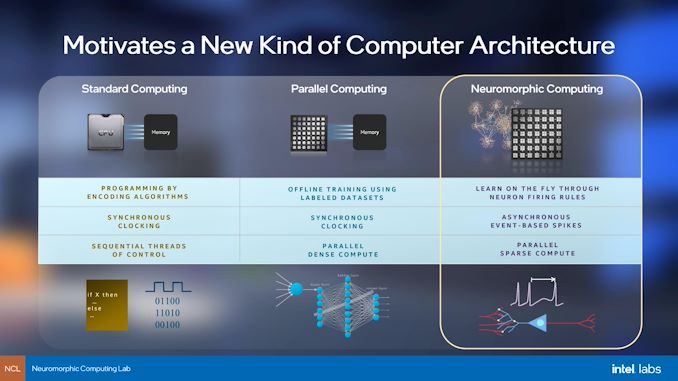

The first generation of artificial intelligence was built on defining rules and emulating classical logic to reach rational conclusions within a narrowly defined problem domain. It was well suited to monitoring and optimizing operations. The second generation is dominated by deep learning networks that analyze content and data, mostly for sensing and perception. The third generation of AI aims to emulate human cognitive processes such as interpretation and autonomous adaptation.

One way to achieve this is neuromorphic computing, which simulates neurons firing in a manner modeled on the human nervous system.

Neuromorphic computing is not a new concept. It was first proposed in the 1980s by Carver Mead, who coined the term “neuromorphic engineering.” Mead spent more than four decades building analog systems that mimic human senses and processing mechanisms, including sensing, seeing, hearing, and thinking. Neuromorphic computing is a subset of neuromorphic engineering that focuses on the “thinking” and “processing” capabilities of such human-like systems. Today, neuromorphic computing is gaining traction as the next milestone in artificial intelligence.

In 2017, Intel released the first-generation Loihi chip, built on a 14-nanometer process with a 60 mm² die. It has more than 2 billion transistors, three Lakemont cores that handle orchestration, 128 neuromorphic cores, and a configurable microcode engine for on-chip training of asynchronous spiking neural networks (SNNs). Because it implements spiking neural networks, Loihi is fully asynchronous and event-driven rather than updating everything on a global, synchronized clock signal. When charge builds up in a neuron, it sends “spikes” along its active synapses. The information in these spikes is largely carried by their timing, so time is effectively part of the data. When incoming spikes accumulate in a neuron over time and its charge crosses a threshold, that neuron fires its own spike to the neurons it is connected to.
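To make the accumulate-and-fire behavior concrete, here is a minimal discrete-time leaky integrate-and-fire (LIF) neuron. It is a textbook sketch, not Loihi's actual circuit: the function name `simulate_lif` and the weight, decay, and threshold values are illustrative assumptions.

```python
def simulate_lif(input_spikes, weight=1.0, decay=0.9, threshold=2.5):
    """Simulate one leaky integrate-and-fire neuron.

    input_spikes: list of 0/1 values, one per time step.
    Returns the list of time steps at which the neuron fired.
    """
    v = 0.0  # membrane potential (the neuron's accumulated "charge")
    fired_at = []
    for t, spike in enumerate(input_spikes):
        v = decay * v + weight * spike  # leak a little, then add any incoming spike
        if v >= threshold:              # threshold crossed: emit a spike
            fired_at.append(t)
            v = 0.0                     # reset after firing
    return fired_at


# Three spikes in quick succession push the neuron over threshold...
print(simulate_lif([1, 1, 1, 0, 0, 0]))           # prints [2]
# ...but the same three spikes spread out in time leak away before they add up.
print(simulate_lif([1, 0, 0, 0, 1, 0, 0, 0, 1]))  # prints []
```

The second call shows why timing is part of the data: the neuron receives exactly the same number of input spikes, yet stays silent because the leak drains its charge between them.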

Like its predecessor, Loihi 2 has 128 neuromorphic cores, but each core now supports 8 times as many neurons and synapses. Each core has 192 KB of flexible memory, and each neuron can be assigned up to 4,096 states depending on the model, compared with the previous limit of 24. The neuron model is now fully programmable, somewhat like an FPGA, which makes the chip more versatile and opens the door to new kinds of neuromorphic applications.
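To show what a programmable neuron model means in practice, the sketch below separates a generic time-stepping loop from the neuron model itself, which is just a user-supplied update function over an arbitrary set of state variables. The Izhikevich "regular spiking" model is used purely as an example; `run_neuron` and `izhikevich_step` are hypothetical names, and this is not how Loihi 2 microcode is actually written.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, float]
# A neuron model: (state, input current, time step) -> (new state, spiked?)
NeuronModel = Callable[[State, float, float], Tuple[State, bool]]


def izhikevich_step(state: State, current: float, dt: float) -> Tuple[State, bool]:
    """One Euler step of the Izhikevich 'regular spiking' neuron (two state variables)."""
    a, b, c, d = 0.02, 0.2, -65.0, 8.0
    v, u = state["v"], state["u"]
    v += dt * (0.04 * v * v + 5.0 * v + 140.0 - u + current)
    u += dt * a * (b * v - u)
    if v >= 30.0:  # spike: reset the potential and bump the recovery variable
        return {"v": c, "u": u + d}, True
    return {"v": v, "u": u}, False


def run_neuron(model: NeuronModel, state: State, currents: List[float],
               dt: float = 0.5) -> List[int]:
    """Generic loop: it knows nothing about the model except its update function."""
    spikes = []
    for t, current in enumerate(currents):
        state, spiked = model(state, current, dt)
        if spiked:
            spikes.append(t)
    return spikes


initial = {"v": -65.0, "u": 0.2 * -65.0}
print(len(run_neuron(izhikevich_step, initial, [0.0] * 1000)))   # no input: prints 0
print(len(run_neuron(izhikevich_step, initial, [10.0] * 1000)))  # constant drive: repeated spikes
```

Swapping in a different model only means writing a different update function with its own state variables; the loop does not change. That separation is the idea behind a programmable neuron, whatever form the real hardware interface takes.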

One drawback of the original Loihi was that its spikes were binary: they could not be programmed and carried no context or range of values. Loihi 2 addresses this with graded spikes that can carry an integer payload. It also provides circuits that are 2 to 10 times faster (2x for neuron state updates, up to 10x for spike generation), eight times more neurons, and four times more link bandwidth for greater scalability.
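The difference between a binary spike and a spike that carries a value (Loihi 2's graded spikes can carry an integer payload) can be sketched as follows. The sketch assumes a spike contributes its synaptic weight either unchanged (binary, as on the original Loihi) or scaled by its payload; the `deliver` function and all numbers are hypothetical illustrations, not Loihi 2's actual encoding.

```python
from typing import List, Optional


def deliver(weights: List[int], payloads: List[Optional[int]]) -> int:
    """Sum the input a neuron receives in one time step.

    payloads[i] is None if presynaptic neuron i stayed silent,
    1 for a plain binary spike, or another integer for a graded spike.
    """
    total = 0
    for w, p in zip(weights, payloads):
        if p is not None:  # only neurons that spiked contribute (event-driven)
            total += w * p  # a binary spike is just the special case p == 1
    return total


weights = [3, 5, 2]
print(deliver(weights, [1, None, 1]))  # binary spikes: 3 + 2 = 5
print(deliver(weights, [4, None, 1]))  # graded spike with payload 4: 3*4 + 2 = 14
```

With binary spikes only, conveying the value 4 would take several spikes over time or several neurons; a graded spike can deliver it in a single event, which is one plausible reason the feature matters for efficiency.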

Loihi 2 was fabricated on a pre-production version of the Intel 4 process, which uses extreme ultraviolet (EUV) lithography. The Intel 4 process allowed Intel to roughly halve the die area, from 60 mm² to 31 mm², while the transistor count rose to 2.3 billion. Compared with earlier process technologies, EUV in Intel 4 has simplified the layout design rules, which allowed Loihi 2 to be developed quickly.
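A quick back-of-the-envelope calculation shows what these figures imply for transistor density. It uses only the numbers quoted in this article and treats Loihi's "more than 2 billion" transistors as exactly 2 billion, so the resulting density ratio is an upper bound rather than a measured value.

```python
# Figures from the article; Loihi's "more than 2 billion" is rounded down to 2.0e9.
loihi1_transistors, loihi1_area_mm2 = 2.0e9, 60.0
loihi2_transistors, loihi2_area_mm2 = 2.3e9, 31.0

density1 = loihi1_transistors / loihi1_area_mm2  # transistors per mm^2
density2 = loihi2_transistors / loihi2_area_mm2

print(f"Loihi:   {density1 / 1e6:.1f} M transistors/mm^2")
print(f"Loihi 2: {density2 / 1e6:.1f} M transistors/mm^2")
print(f"Density ratio: at most {density2 / density1:.2f}x")
print(f"Die area reduction: {1 - loihi2_area_mm2 / loihi1_area_mm2:.0%}")
```

This prints about 33.3 and 74.2 million transistors per mm², a density ratio of at most about 2.23x, and a 48% smaller die. Because Loihi actually has somewhat more than 2 billion transistors, its true density is a little higher and the real ratio slightly lower.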

Loihi 2 adds support for three-factor learning rules and improves synaptic (internal interconnect) compression for faster internal data transfer. Its parallel off-chip links, which support the same kinds of compression as the internal synapses, can extend the on-chip mesh network across many physical chips to build much larger and more powerful neuromorphic systems. Loihi 2 also introduces new approaches to continual and associative learning. Finally, the chip includes 10GbE, GPIO, and SPI interfaces that make it easier to integrate with conventional systems.
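Three-factor learning rules extend classic two-factor Hebbian learning (presynaptic and postsynaptic activity) with a third, modulatory signal such as a reward. A common textbook formulation accumulates an eligibility trace from pre/post coincidences and changes the weight only when the third factor arrives. The sketch below follows that formulation; the function name, learning rate, and trace decay are illustrative assumptions, and it is not the rule format that Loihi 2's learning engine actually uses.

```python
from typing import List


def three_factor_update(pre: List[int], post: List[int], reward: List[float],
                        w: float = 0.5, lr: float = 0.1,
                        trace_decay: float = 0.8) -> float:
    """Reward-modulated Hebbian learning for a single synapse.

    pre, post: 0/1 spike trains of the presynaptic and postsynaptic neurons.
    reward:    the third factor, one value per time step (0.0 = no signal).
    Returns the final synaptic weight.
    """
    eligibility = 0.0
    for x, y, r in zip(pre, post, reward):
        # Factors 1 and 2: a pre/post coincidence marks the synapse as "eligible".
        eligibility = trace_decay * eligibility + x * y
        # Factor 3: the weight only changes when a modulatory signal arrives.
        w += lr * r * eligibility
    return w


pre, post = [1, 1, 0, 0], [1, 1, 0, 0]
print(three_factor_update(pre, post, [0, 0, 0, 0]))                # no reward: prints 0.5
print(round(three_factor_update(pre, post, [0, 0, 0, 1.0]), 4))   # delayed reward: prints 0.6152
```

The eligibility trace is what lets a reward that arrives a few steps after the correlated activity still strengthen the right synapse; without coincident pre/post spikes, the same reward leaves the weight unchanged.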

Loihi 2 further improves flexibility with faster, standardized I/O interfaces that support Ethernet connections, vision sensors, and larger mesh networks. These changes are meant to make the chip more compatible with robots and sensors, which have long been among Loihi’s use cases.

Another significant change is in the part of the processor that evaluates a neuron’s state and decides whether to send a spike. In the original Loihi, users could express that decision only as a simple bit of arithmetic. In Loihi 2, a programmable pipeline also lets them perform comparisons and control the flow of instructions.
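The contrast can be sketched in Python. The first function applies one fixed rule, the same threshold test for every neuron; the second adds comparisons and branching of the kind a programmable pipeline makes possible, here a refractory period and a cap on the total number of spikes. Both functions, the `NeuronState` class, and the refractory and cap rules are hypothetical examples chosen for illustration, not Loihi 2 microcode.

```python
from dataclasses import dataclass


@dataclass
class NeuronState:
    v: float              # membrane potential
    refractory: int = 0   # time steps left before the neuron may fire again
    spike_count: int = 0  # spikes emitted so far


def fixed_rule(s: NeuronState, threshold: float = 1.0) -> bool:
    """Hard-wired decision: the same threshold test for every neuron."""
    return s.v >= threshold


def programmable_rule(s: NeuronState, threshold: float = 1.0,
                      refractory_steps: int = 2, max_spikes: int = 3) -> bool:
    """User-defined decision with comparisons and control flow (illustrative)."""
    if s.refractory > 0:             # branch 1: still recovering, stay silent
        s.refractory -= 1
        return False
    if s.spike_count >= max_spikes:  # branch 2: spike budget used up
        return False
    if s.v >= threshold:             # branch 3: ordinary threshold test
        s.refractory = refractory_steps
        s.spike_count += 1
        return True
    return False


# Hold the potential above threshold for ten steps and compare the two rules.
potentials = [1.5] * 10
fixed = [fixed_rule(NeuronState(v)) for v in potentials]
state = NeuronState(v=0.0)
programmable = []
for v in potentials:
    state.v = v
    programmable.append(programmable_rule(state))
print(fixed)
print(programmable)
```

The fixed rule fires on all ten steps. The programmable rule fires on steps 0, 3, and 6, waits out its two-step refractory period after each spike, and then stays silent once its budget of three spikes is spent.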


Intel says Loihi 2’s enhanced architecture can support backpropagation, the algorithm used to train many AI models, which may help accelerate the commercialization of neuromorphic chips. Intel also reports that Loihi 2 can run inference, the step in which a trained model interprets new data, with up to 60 times fewer operations per inference than Loihi and without any loss in accuracy.

The Neuromorphic Research Cloud is presently offering two Loihi 2-based neuromorphic devices to researchers. These are:

- Oheo Gulch, a single-chip add-in card that pairs Loihi 2 with an Intel Arria 10 FPGA for interfacing, intended for early evaluation.

- Kapoho Point, an 8-chip system board that mounts eight Loihi 2 chips in a 4×4-inch form factor, will be available shortly. It will have GPIO pins along with “standard synchronous and asynchronous interfaces” that allow it to be used with sensors and actuators in embedded robotics applications.

These systems will be available through a cloud service to members of the Intel Neuromorphic Research Community (INRC).

Intel has also created Lava to address the need for software convergence, benchmarking, and cross-platform collaboration in neuromorphic computing. As an open, modular, and extensible framework, it is intended to let academics and application developers build on one another’s work and eventually converge on a common set of tools, techniques, and libraries.

Lava operates on a range of conventional and neuromorphic processor architectures, allowing for cross-platform execution and compatibility with a variety of artificial intelligence, neuromorphic, and robotics frameworks. Users can get the Lava Software Framework for free on GitHub.

Edy Liongosari, chief research scientist and managing director at Accenture Labs, believes that advances such as the new Loihi 2 chip and the Lava API will be crucial to the future of neuromorphic computing. “Next-generation neuromorphic architecture will be crucial for Accenture Labs’ research on brain-inspired computer vision algorithms for intelligent edge computing that could power future extended-reality headsets or intelligent mobile robots,” says Liongosari.

For now, Loihi 2 has piqued the interest of the Queensland University of Technology, which is looking to develop more sophisticated neural modules to help implement biologically inspired navigation and map-formation algorithms. The first-generation Loihi is already being used at Los Alamos National Laboratory to study tradeoffs between quantum and neuromorphic computing, and researchers there have also used it to implement the backpropagation algorithm, which is used to train neural networks.