Miltos Allamanis, a principal researcher at Microsoft Research, and Marc Brockschmidt, a senior principal research director at Microsoft Research, recently unveiled their new deep learning model, BugLab. According to the researchers, BugLab is a Python implementation of a new approach to self-supervised learning of both bug detection and repair. The model is intended to help developers discover flaws in their code and troubleshoot their applications.

Finding bugs or algorithm flaws is crucial because it enables developers to remove bias, improve the technology, and reduce the risk of AI-based discrimination against certain groups of people. To that end, Microsoft is developing AI bug detectors that are trained to find and fix flaws without using data from actual bugs. According to the researchers, the need to train without labeled examples arose from a scarcity of annotated real-world bugs that could be used to train bug-finding deep learning models. Although a significant amount of source code is available, most of it is not annotated.

BugLab’s current goal is to uncover difficult-to-detect flaws rather than critical bugs that can be quickly detected using traditional software analysis. Researchers assert that the deep learning model saves money by eliminating the time-consuming process of manually developing a model to discover faults.

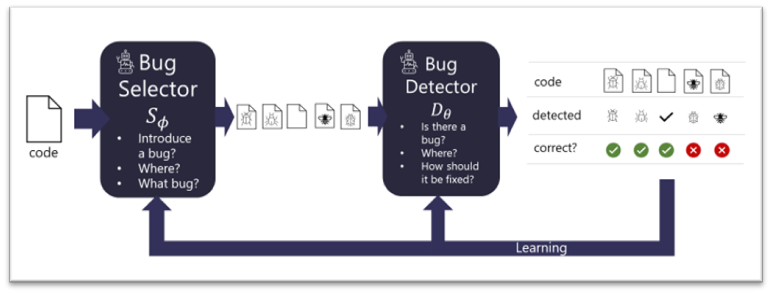

BugLab employs two competing models that learn by playing a “hide and seek” game inspired by generative adversarial networks (GANs). A bug selector model decides whether to introduce a bug, where to introduce it, and what form it should take (for example, replacing a certain “+” with a “-”). The code is then rewritten to introduce the bug according to the selector's choice. The bug detector then tries to determine whether a bug has been introduced into the code and, if so, where it is and how to repair it.
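The rewrite step can be illustrated with a minimal sketch. It is not BugLab's implementation: the function names `candidate_locations` and `introduce_bug` are hypothetical, only the “+”/“-” swap mentioned above is covered, and a random choice stands in for the learned selector (which makes this closer to the random-insertion baseline than to BugLab itself). It assumes Python 3.9 or later for `ast.unparse`.

```python
import ast
import random

# Hypothetical rewrite rule for illustration: swap "+" and "-".
SWAPS = {ast.Add: ast.Sub, ast.Sub: ast.Add}


def candidate_locations(tree):
    """Return every binary-operator node whose operator could be swapped."""
    return [
        node for node in ast.walk(tree)
        if isinstance(node, ast.BinOp) and type(node.op) in SWAPS
    ]


def introduce_bug(source, rng):
    """Decide whether and where to rewrite, then apply the rewrite.

    Returns (rewritten_source, index), where index is None when the
    code was left unchanged. A random choice stands in for the learned
    selector here; that substitution is an assumption of this sketch.
    """
    tree = ast.parse(source)
    candidates = candidate_locations(tree)
    # "None" models the selector's option of introducing no bug at all.
    choice = rng.choice([None] + list(range(len(candidates))))
    if choice is None:
        return ast.unparse(tree), None
    target = candidates[choice]
    target.op = SWAPS[type(target.op)]()
    return ast.unparse(tree), choice


if __name__ == "__main__":
    snippet = "def total(price, tax):\n    return price + tax\n"
    rewritten, location = introduce_bug(snippet, random.Random())
    print(location)
    print(rewritten)
```

A detector would then receive only the rewritten snippet and have to recover the location and the original operator.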

These two models are jointly trained on millions of code snippets without labeled data, i.e., in a self-supervised manner. The bug selector tries to “hide” interesting defects within each code snippet, while the detector seeks to outsmart the selector by detecting and repairing them.

As a result of this process, the detector improves its ability to discover and correct defects, while the bug selector improves its ability to create progressively more difficult training samples.

While GANs are conceptually similar to this training process, the Microsoft BugLab bug selector does not create a new code snippet from scratch but rather rewrites an existing one (assumed to be correct). Furthermore, code rewrites are – by definition – discontinuous, so gradients cannot be propagated from the detector back to the selector.

Beyond knowing several programming languages, a programmer must also devote time to the arduous task of correcting errors in code, some of them simple and others so subtle that they can go unnoticed even by large artificial intelligence models. BugLab aims to relieve programmers of part of this burden by handling the errors it can detect, giving them more time to focus on the more complex bugs that an AI cannot find.

To assess performance, Microsoft manually annotated a small dataset of 2,374 real bugs found in packages from the Python Package Index. The researchers observed that models trained with the “hide-and-seek” strategy outperformed other models, such as detectors trained on randomly inserted bugs, by up to 30%.

The findings are encouraging, indicating that around 26% of defects may be detected and corrected automatically. However, the findings also revealed a high number of false-positive alarms. While several known flaws were uncovered, just 19 of BugLab’s 1,000 warnings turned out to be true bugs. Eleven of the 19 previously unknown bugs were reported on GitHub; six of the fixes were merged, and five were still awaiting approval. According to Microsoft, the approach seems promising, although further work is needed before such models can be used in practice. There is also a possibility that this technology will become available for commercial use at some point.
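To put the false-positive figures in perspective, the short calculation below works through the numbers quoted above. The variable names are illustrative, and the ratios are derived only from the counts reported in this article.

```python
# Counts taken from the figures quoted in the article.
warnings_raised = 1000
true_bugs = 19
reported = 11
merged = 6
pending = 5

# Share of warnings that pointed at a real bug.
precision = true_bugs / warnings_raised
# Share of warnings that were false alarms.
false_alarm_rate = 1 - precision
# Share of the confirmed bugs that were reported upstream.
reported_share = reported / true_bugs

print(f"precision: {precision:.1%}")                # precision: 1.9%
print(f"false alarms: {false_alarm_rate:.1%}")      # false alarms: 98.1%
print(f"reported: {reported_share:.1%}")            # reported: 57.9%
print(f"merged + pending = {merged + pending}")     # merged + pending = 11
```

In other words, roughly one warning in fifty was a real bug, which is why the researchers say more work is needed before practical use.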

The findings have been published in a paper titled Self-Supervised Bug Detection and Repair, which was presented at the 2021 Conference on Neural Information Processing Systems (NeurIPS 2021).