OpenAI's GPT-4 Vision has changed how we engage with AI systems by accepting both text and images as input. However, its closed-source, subscription-based model limits its use at scale. In response, the open-source community is rallying around LLaVa 1.5 (Large Language and Vision Assistant) as an alternative to GPT-4 Vision.

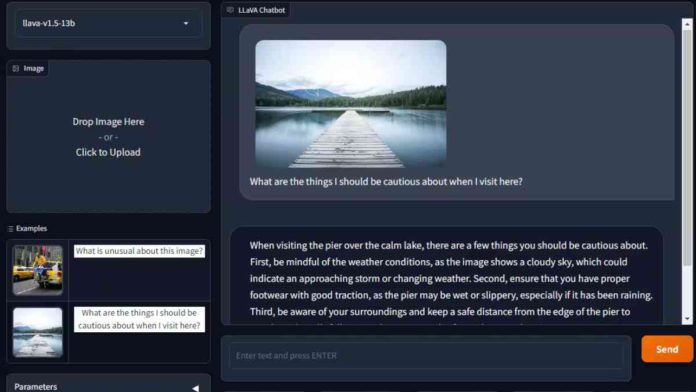

LLaVa 1.5 is an open-source multimodal model. It accepts text and images as input and returns surprisingly accurate answers. At its core, it combines a vision encoder with a large language model (LLM) to handle both visual and language understanding.

For the vision encoder, the developers of LLaVa 1.5 use CLIP (Contrastive Language-Image Pre-training). OpenAI released CLIP in 2021; it was trained on a massive dataset of image-description pairs to learn how images and text relate to each other. The language model component is Vicuna, a version of Meta’s open-source LLaMA fine-tuned for instruction following.
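As a rough illustration of how a vision encoder and a language model can be wired together, the sketch below follows the data flow in plain NumPy. It is not LLaVa's actual code. The projection step that maps image features into the language model's embedding space, the function names, and all dimensions are assumptions made for illustration, and random numbers stand in for the real CLIP and Vicuna weights.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes only; the real models are far larger.
NUM_PATCHES = 16   # image patches produced by the vision encoder
VISION_DIM = 32    # width of each patch feature vector
LLM_DIM = 64       # width of the language model's token embeddings


def encode_image(image: np.ndarray) -> np.ndarray:
    """Stand-in for the CLIP vision encoder: one feature vector per patch."""
    # A real encoder would run a vision transformer over the image;
    # here we return random features of the right shape.
    return rng.standard_normal((NUM_PATCHES, VISION_DIM))


def project(features: np.ndarray, w1: np.ndarray, w2: np.ndarray) -> np.ndarray:
    """Hypothetical two-layer projector from vision space to LLM space."""
    hidden = np.maximum(features @ w1, 0.0)  # ReLU chosen for simplicity
    return hidden @ w2


def build_llm_input(image, text_embeddings, w1, w2) -> np.ndarray:
    """Place projected image tokens ahead of the text token embeddings."""
    visual_tokens = project(encode_image(image), w1, w2)
    return np.concatenate([visual_tokens, text_embeddings], axis=0)


# Randomly initialised projector weights (assumed shapes).
w1 = rng.standard_normal((VISION_DIM, LLM_DIM))
w2 = rng.standard_normal((LLM_DIM, LLM_DIM))

image = np.zeros((224, 224, 3))                      # dummy image
text_embeddings = rng.standard_normal((5, LLM_DIM))  # 5 dummy prompt tokens

sequence = build_llm_input(image, text_embeddings, w1, w2)
print(sequence.shape)  # (21, 64): 16 image tokens + 5 text tokens
```

The point of the sketch is that, once projected, image patches look to the language model like ordinary tokens, so a single sequence can carry both the picture and the prompt.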

Although LLaVa 1.5 is often described as mimicking GPT-4, it delivers impressive results for a small open-source model. It is important to note, however, that it was trained on data generated by ChatGPT, so the terms of use attached to that data restrict developers from using it commercially.

For all its cutting-edge technology, LLaVa 1.5 is not yet ready to compete with GPT-4 Vision. It falls short on ease of use, and it lacks external plugins and integration with other OpenAI tools. That said, this is only the beginning for open-source generative AI tools. If growth continues at this pace, we expect to see a revolution in generative AI technologies.