According to Canada’s Privacy Commissioner, the Royal Canadian Mounted Police (RCMP) violated the country’s Privacy Act by using Clearview AI’s facial recognition technology. The Privacy Commissioner has submitted a special report on the matter to Parliament.

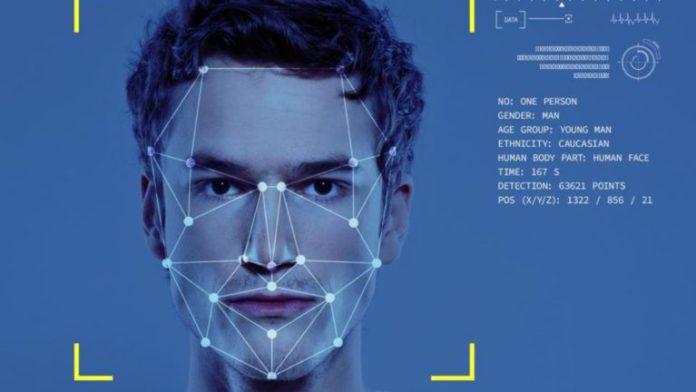

The Office of the Privacy Commissioner (OPC) said that the technology allowed RCMP officers to compare photographs of Canadians against more than three billion images that Clearview had collected from the internet without the consent of the people pictured.

The company’s method of collecting images violates the Personal Information Protection and Electronic Documents Act (PIPEDA). The OPC added that by using Clearview’s service, the RCMP contravened section 4 of the Privacy Act.


Dr. Brenda McPhail, Director of the Privacy, Technology and Surveillance Program at the Canadian Civil Liberties Association (CCLA), said, “Police aren’t allowed to use surveillance tools, if the information that feeds, creates or forms the basis for that tool was unlawfully acquired.” She added, “It would mean that those bodies, that are duty bound to enforce our laws, would have a loophole that would allow them to break them, sort of at a whim.”

According to the report, the RCMP repeatedly misled the OPC, first by denying that it used the technology at all. It later claimed that its use was limited to identifying and locating children who had been victims of cyberbullying and online sexual abuse.

In a later statement to the OPC, the RCMP said it had made only 78 searches, but Clearview’s records showed more than 520. The RCMP attributed the gap to repeated searches for the same individuals, but it remains unclear whether that explanation holds. The investigation also found that only 6% of the searches were related to identifying victims of online sexual abuse.