UVeye, a startup that develops automated vehicle inspection systems, announced today that it has closed a $100 million funding round led by Hanaco VC.

The Series D round, which also included existing investors W.R. Berkley Corporation, GM Ventures, CarMax, F.I.T. Ventures L.P., and several Israeli institutional investors, brings the company's total funding to about $200 million.

UVeye's drive-through system uses artificial intelligence and sensor fusion to quickly identify mechanical and exterior defects on the underside or sides of any car. It can also spot modifications and foreign objects that might pose a problem for the vehicle.

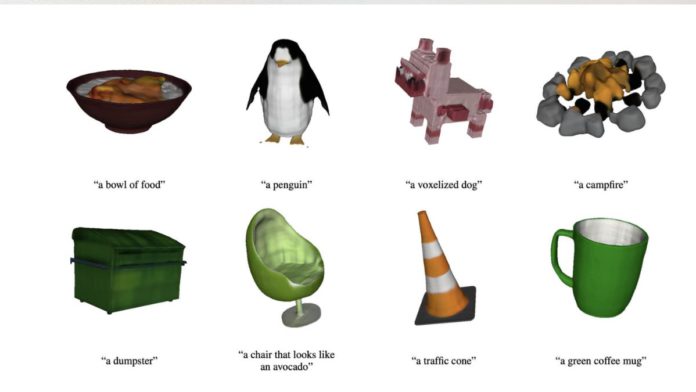


According to the company, a system like this becomes necessary as electric and autonomous vehicles grow more complex. The ability to perform low-cost, high-frequency predictive maintenance will become crucial for businesses that operate fleets of these cars, it says.

UVeye co-founder and CEO Amir Hever said that the company’s goal is to standardize how the auto industry identifies damage and mechanical problems in vehicles and to set new quality standards. “Our patent-protected technology delivers unmatched solutions for swiftly and precisely identifying vehicle problems to automakers, dealers, and fleet operators,” he said.