DeepMind has introduced a framework for building AI agents that can carry out human-like actions in video game worlds. With its paper titled “Improving Multimodal Interactive Agents with Reinforcement Learning from Human Feedback,” DeepMind is taking early steps toward video game AIs that understand human concepts and can interact with people on their own.

Mimicking human behavior is very challenging for artificial intelligence. It requires a deep understanding of natural language and situated intent. Most researchers agree that hand-coding every nuance of such interactions is practically impossible. Instead of extensive hand-written rules, they now rely on modern machine learning so that models can learn this behavior from data.

DeepMind developed a research paradigm in which agent behavior improves through grounded, open-ended interaction with humans. Although the paradigm is new, it has already produced AI agents that can listen, talk, search, ask questions, and navigate in real time.


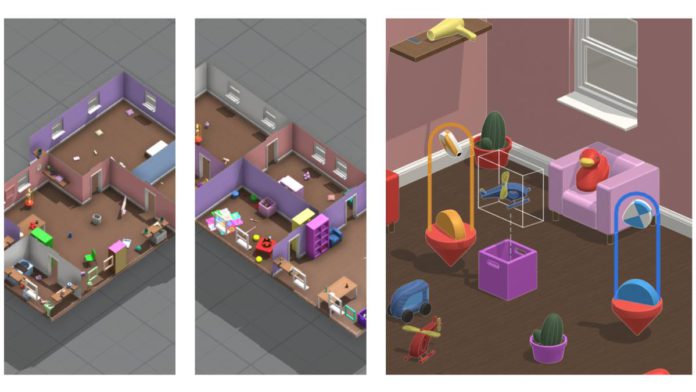

DeepMind created a virtual “playhouse” filled with recognizable objects in randomized configurations, designed for navigation and search. The interface also includes a chat window that allows unconstrained communication. The approach begins with agents that have an unrefined set of behaviors learned from people interacting with one another. This “prior behavior” is what makes it possible for humans to judge the agents’ interactions.

These human judgments are then optimized with reinforcement learning to produce better agent behavior. To learn more goal-directed behavior, an agent must pursue an object and master the movements around it.

To measure how effectively the agents perform in the game, a reward model is needed. DeepMind researchers trained a reward model on human preferences, then placed the agents in a simulator and had them work through a set of questions and answers. The reward model scored the agents’ behavior as they listened and responded in the environment.
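To make the idea of a preference-trained reward model more concrete, here is a minimal sketch of one common technique: a pairwise (Bradley-Terry style) reward model that learns to score a preferred behavior above a rejected one. This is a generic illustration, not necessarily the exact formulation in DeepMind’s paper. Everything in it is an assumption for illustration: behaviors are reduced to small hand-picked feature vectors, the reward is linear rather than a neural network, and the names `reward`, `train_reward_model`, and the three features are hypothetical.

```python
import math


def reward(w, x):
    """Hypothetical linear reward model r(x) = w . x (stand-in for a neural network)."""
    return sum(wi * xi for wi, xi in zip(w, x))


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_reward_model(pairs, dim, lr=0.1, epochs=200):
    """Fit weights so that human-preferred behaviors score higher.

    pairs: list of (preferred_features, rejected_features) tuples.
    Uses the Bradley-Terry model: P(a preferred over b) = sigmoid(r(a) - r(b)).
    """
    w = [0.0] * dim
    for _ in range(epochs):
        for better, worse in pairs:
            p = sigmoid(reward(w, better) - reward(w, worse))
            # Gradient descent on -log p: move w along (better - worse), scaled by (1 - p)
            for i in range(dim):
                w[i] += lr * (1.0 - p) * (better[i] - worse[i])
    return w


# Hypothetical features: [answered_correctly, moved_toward_object, idle_steps]
pairs = [
    ([1.0, 1.0, 0.0], [0.0, 1.0, 0.0]),  # a correct answer was preferred
    ([0.0, 1.0, 0.0], [0.0, 0.0, 1.0]),  # moving toward the object beat idling
    ([1.0, 0.0, 0.0], [0.0, 0.0, 1.0]),  # answering beat idling
]
w = train_reward_model(pairs, dim=3)

# The trained model can now score new behavior, e.g. as a reward signal for RL
print(reward(w, [1.0, 1.0, 0.0]), reward(w, [0.0, 0.0, 1.0]))
```

In a full system, scores like these would serve as the reward that reinforcement learning maximizes, so agents are pushed toward behavior that humans tend to prefer.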

The technique is still in its infancy, and the researchers welcome comments and feedback on it.