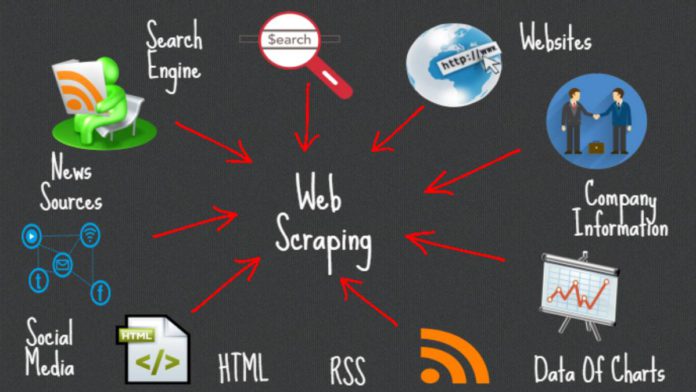

Web scraping is an automated technique for extracting large amounts of data from websites. Most of this data is semi-structured HTML, which is later transformed into structured data and stored in a database or spreadsheet so that it can be used in multiple applications. Although web scraping can be done manually, automated methods using dedicated web scraping tools are usually preferred because they are cheaper and faster.

Web scraping, however, is rarely a simple operation. First, the scraping tool is given one or more URLs. The scraper then loads the entire HTML code of each requested page and extracts either all of the data or only specific items. Some advanced web scraping tools can render the entire webpage, including CSS and JavaScript elements. Finally, the web scraper exports the acquired data in a more user-friendly format. Most scrapers export to Excel spreadsheets or CSV, while more advanced ones also support formats such as JSON.
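
The four steps above (feed in a URL, load the HTML, extract data, export it) can be sketched with nothing but the Python standard library. This is a minimal illustration, not how any particular tool works: the function names, the choice to extract links, and the sample HTML are assumptions, and the sketch does not render CSS or JavaScript.

```python
from __future__ import annotations

import csv
import io
import json
from html.parser import HTMLParser
from urllib.request import urlopen


def fetch_html(url: str) -> str:
    """Load the raw HTML for one URL (no CSS or JavaScript rendering)."""
    with urlopen(url, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class LinkExtractor(HTMLParser):
    """Extract specific data: the text and href of every <a> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.rows: list[dict[str, str]] = []
        self._href: str | None = None
        self._text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href") or ""
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.rows.append({"text": "".join(self._text).strip(), "href": self._href})
            self._href = None


def extract_links(html: str) -> list[dict[str, str]]:
    parser = LinkExtractor()
    parser.feed(html)
    parser.close()
    return parser.rows


def to_csv(rows: list[dict[str, str]]) -> str:
    """Export the rows as CSV text (opens directly in a spreadsheet)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["text", "href"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def to_json(rows: list[dict[str, str]]) -> str:
    """Export the same rows as JSON."""
    return json.dumps(rows, indent=2)


if __name__ == "__main__":
    # In a real run: html = fetch_html("https://example.com/")
    html = '<ul><li><a href="/a">First</a></li><li><a href="/b">Second</a></li></ul>'
    rows = extract_links(html)
    print(to_csv(rows))
    print(to_json(rows))
```

Keeping extraction and export as separate functions mirrors the pipeline: the same rows can be written to CSV, JSON, or a database without touching the parsing code.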

With digitization, websites have accumulated massive amounts of data, and web scraping techniques have grown in popularity as a result. Because website data comes in many kinds and sizes, web scraping tools differ in functionality and features. Some of the standard data scraping techniques used by the best web scraping tools are:

- HTTP programming

- HTML parsing

- DOM parsing

- Semantic annotation

- Computer vision web-page analysis

Best Web Scraping Tools You Should Try

Since every web crawler is different, choosing the right one can be challenging. This article explains what a website scraper is and compiles a list of some of the best web scraping tools.

- Scraper API

Web scraping is a complex procedure, but Scraper API, one of the best web scrapers, simplifies it by handling proxies, browsers, and CAPTCHAs for you. The Scraper API team has built many web scrapers and has repeatedly refined the setup process for building use-specific ones. Scrapers differ depending on the data they need to extract, but all scraping APIs, Scraper API included, work in a similar way: the user requests a particular source, Scraper API receives the request and connects to the target site using the details provided, extracts the data, and returns it to you for immediate use or for processing and storage.
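
To illustrate that request flow, the sketch below wraps a target page URL in a request addressed to the scraping service, which then fetches the page on your behalf and returns it. It assumes a GET endpoint at `https://api.scraperapi.com/` taking `api_key` and `url` query parameters; treat those names as assumptions and check Scraper API's current documentation before relying on them. The helper function names are hypothetical.

```python
from urllib.parse import parse_qs, urlencode, urlparse
from urllib.request import urlopen

# Assumed endpoint; confirm it against the provider's current documentation.
SCRAPER_API_ENDPOINT = "https://api.scraperapi.com/"


def build_request_url(api_key: str, target_url: str, **options: str) -> str:
    """Wrap the page you want in a request addressed to the scraping API.

    Extra keyword arguments are passed through as query parameters; which
    ones the service accepts is provider-specific.
    """
    params = {"api_key": api_key, "url": target_url, **options}
    # urlencode percent-encodes the target URL so its own "?" and "&" survive.
    return SCRAPER_API_ENDPOINT + "?" + urlencode(params)


def scrape_via_api(api_key: str, target_url: str) -> str:
    """The API fetches target_url on your behalf and returns the page body."""
    request_url = build_request_url(api_key, target_url)
    # Generous timeout: the service may need to retry through proxies first.
    with urlopen(request_url, timeout=60) as response:
        return response.read().decode("utf-8", errors="replace")


if __name__ == "__main__":
    url = build_request_url("YOUR_API_KEY", "https://example.com/page?id=1")
    print(url)
    # Decoding the query string shows the target URL is carried intact.
    print(parse_qs(urlparse(url).query))
```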

Scraper API also offers a new Async Scraper endpoint that runs web scraping jobs at scale without timeouts, making data scraping more resilient. The API integrates seamlessly with Node.js, Puppeteer, and Cheerio.

The 7-day free trial includes 5,000 free API credits; after the trial, you can continue using the service for US$49/month. For more pricing details, visit the website.

- Smartproxy SERP Scraping API

Scraping Google Search results pages can be tedious because Google does not allow it. Moreover, scraping at more than eight keyword requests per hour risks detection, and more than ten keyword requests per hour will get you blocked. Smartproxy's SERP Scraping API, one of the best web scraping tools, offers an excellent solution: it combines a web scraper, a data parser, and a sizable proxy network in a single API.
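
If you query search pages yourself instead of through such an API, you have to stay under limits like the ones quoted above. A common client-side approach is a sliding-window rate limiter; the sketch below is a minimal version. The eight-requests-per-hour budget is taken from the paragraph above, not from any documented Google limit, and the class name is hypothetical.

```python
from __future__ import annotations

import time
from collections import deque
from typing import Callable


class SlidingWindowLimiter:
    """Allow at most `max_requests` requests in any rolling `window` seconds."""

    def __init__(self, max_requests: int, window: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_requests = max_requests
        self.window = window
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._sent: deque[float] = deque()

    def wait_time(self) -> float:
        """Seconds until the next request fits in the budget (0.0 if it fits now)."""
        now = self.clock()
        # Drop timestamps that have slid out of the window.
        while self._sent and now - self._sent[0] >= self.window:
            self._sent.popleft()
        if len(self._sent) < self.max_requests:
            return 0.0
        return self.window - (now - self._sent[0])

    def try_acquire(self) -> bool:
        """Record a request and return True only if it is within the budget."""
        if self.wait_time() > 0:
            return False
        self._sent.append(self.clock())
        return True

    def acquire(self) -> None:
        """Block (sleep) until a request is allowed, then record it."""
        while not self.try_acquire():
            time.sleep(self.wait_time())


# Example budget from the figure above: 8 keyword requests per hour.
serp_limiter = SlidingWindowLimiter(max_requests=8, window=3600)
```

Calling `serp_limiter.acquire()` before each search request spaces the requests out automatically; a sliding window is used instead of a fixed hourly counter so that bursts straddling an hour boundary cannot exceed the budget.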

This full-stack web scraping tool lets users retrieve structured data from the major search engines with a single successful API request. Smartproxy's search engine proxies can be used for everything from monitoring prices to retrieving paid and organic results to tracking keyword rankings and other SEO metrics in real time.

Smartproxy's scraper service offers multiple plans based on how many proxy requests you need. There are four plans: Lite, Basic, Standard, and Solid. Smartproxy also offers enterprise-level plans for more complex needs. For more details, check their pricing page.

- ParseHub

The previous generation of scraping tools relied on hand-written code and hours of programming. To make web scraping more precise and save coding time, no-code development platforms like ParseHub have emerged.

With this web scraping tool, users can create their own data extraction workflows without programming knowledge. ParseHub handles the selection of elements in the page source and predicts neighboring, similar elements on its own.

ParseHub offers a free version that requires no credit card and lets users extract 200 pages per run within 40 minutes. It also offers Standard, Professional, and Enterprise plans with better service and more pages. Check their pricing page for more details.

- Web Scraping using Beautiful Soup

The tools mentioned above are third-party scraping services. You can also build your own scraper with open-source libraries and code. Beautiful Soup is a Python library that extracts data from HTML, XML, and similar formats. Put simply, it helps users pull specific content from a webpage by stripping away the HTML markup and keeping the information. The library can be used to isolate titles, links, and text from HTML tags and to modify the HTML within the document.

To scrape data, the user sends an HTTP request to the target URL. Once the server returns the page, the data has to be parsed with an HTML parser, such as html5lib, which creates a nested data structure. The final step is to navigate and search this parse tree with Beautiful Soup.

This is a free way to extract data from web pages. Install the required third-party libraries (requests, html5lib, and bs4) with pip, then follow the steps above to scrape data.
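
The three steps above (request, parse, navigate) might look like this with Beautiful Soup. It is a minimal sketch under stated assumptions: it swaps in the standard library's `urllib.request` for `requests` to keep dependencies down, defaults to Python's built-in `"html.parser"` (pass `"html5lib"` if it is installed), and the function names and extracted fields are illustrative choices.

```python
from urllib.request import urlopen

from bs4 import BeautifulSoup


def fetch(url: str) -> str:
    """Step 1: send an HTTP request to the target URL and return the HTML.

    With the requests library this would be requests.get(url, timeout=10).text.
    """
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def parse_page(html: str, parser: str = "html.parser") -> dict:
    """Steps 2 and 3: build the parse tree, then navigate and search it."""
    # Use parser="html5lib" if html5lib is installed, as described above.
    soup = BeautifulSoup(html, parser)
    # Remove markup we never want in the output (scripts and styles).
    for tag in soup(["script", "style"]):
        tag.decompose()
    title = soup.title.get_text(strip=True) if soup.title else ""
    links = [a["href"] for a in soup.find_all("a", href=True)]
    paragraphs = [p.get_text(" ", strip=True) for p in soup.find_all("p")]
    return {"title": title, "links": links, "paragraphs": paragraphs}


if __name__ == "__main__":
    # In a real run: page = parse_page(fetch("https://example.com/"))
    sample = "<title>Demo</title><p>Hello <b>world</b></p><a href='/x'>X</a>"
    page = parse_page(sample)
    print(page["title"], page["links"], page["paragraphs"])
```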

- Octoparse

Octoparse is a cloud-based web data extraction tool that helps scrape data from various websites. Users can scrape product comments, reviews, social media channels, and other unstructured data and save it in formats such as HTML, Excel, and plain text. Octoparse can run multiple extraction tasks simultaneously, and these tasks can run in real time or be scheduled at regular intervals.

Octoparse offers two modes: Wizard Mode and Advanced Mode. Wizard Mode provides step-by-step instructions for scraping data, while Advanced Mode offers features for more complex web pages. Additionally, its IP rotation feature helps prevent target websites from blocking your requests.

Octoparse is sold on a monthly subscription basis, with support through email and an online knowledge base. The free plan is capped at 10,000 records per export and allows only a small number of concurrent crawlers and runs. For more information, refer to the pricing page.

- Helium Scraper

Most websites that display lists of information do so by querying a database and presenting the results in a readable layout. A web scraper reverses this procedure: it takes unstructured web pages and converts them back into database records. Helium Scraper is a web scraper that focuses on what kind of data to extract rather than on how to extract it.
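
The reversal described above, from a rendered page back to database rows, can be sketched with Python's `html.parser` and `sqlite3`. This illustrates the general idea, not Helium Scraper's internals; it assumes a simple table whose first row holds the column names and whose rows all have the same number of cells, and the helper names are hypothetical.

```python
from __future__ import annotations

import sqlite3
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collect the text of every <th>/<td> cell, one list per <tr>."""

    def __init__(self) -> None:
        super().__init__()
        self.rows: list[list[str]] = []
        self._cell: list[str] | None = None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.rows.append([])
        elif tag in ("td", "th"):
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self.rows[-1].append("".join(self._cell).strip())
            self._cell = None


def quote_ident(name: str) -> str:
    """Quote an SQL identifier, since header names come from untrusted HTML."""
    return '"' + name.replace('"', '""') + '"'


def table_to_sqlite(html: str, conn: sqlite3.Connection, table: str = "items") -> int:
    """Turn an HTML table (first row = headers) back into database rows."""
    parser = TableParser()
    parser.feed(html)
    parser.close()
    header, *body = parser.rows
    columns = ", ".join(quote_ident(name) for name in header)
    placeholders = ", ".join("?" for _ in header)
    conn.execute(f"CREATE TABLE IF NOT EXISTS {quote_ident(table)} ({columns})")
    # Cell values go through "?" placeholders, never string formatting.
    conn.executemany(f"INSERT INTO {quote_ident(table)} VALUES ({placeholders})", body)
    conn.commit()
    return len(body)
```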

It scrapes with multiple off-screen Chromium browsers, offers a simple interface, and combines web scraping and API calls in a single project. The tool also supports JavaScript code and can generate functions to match, split, or replace extracted text.
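
Helium Scraper generates these functions inside the tool. Purely to illustrate what match, split, and replace steps do to extracted text, here is the same idea with Python's `re` module; the price pattern and the breadcrumb format are hypothetical examples.

```python
import re

# Hypothetical pattern for a dollar price such as "$4.99".
PRICE_PATTERN = re.compile(r"\$(\d+(?:\.\d{2})?)")


def clean_extracted(text: str) -> dict:
    """Post-process one scraped text field with replace, match, and split."""
    # Replace: collapse whitespace runs left over from the page layout.
    normalized = re.sub(r"\s+", " ", text).strip()
    # Match: pull out the first price, if there is one.
    found = PRICE_PATTERN.search(normalized)
    price = float(found.group(1)) if found else None
    # Split: break a breadcrumb such as "Home | Pens | Blue pen" into parts.
    parts = [part.strip() for part in normalized.split("|") if part.strip()]
    return {"text": normalized, "price": price, "parts": parts}


print(clean_extracted("  Home |\n Pens | Blue pen  $4.99 "))
```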

New users can start with a 10-day trial. After that, a one-time purchase buys a lifetime license for the software. For more information, refer to the pricing page.

- Apify

Apify is an automation, data extraction, and web scraping platform. With Apify, users can create an API with integrated datacenter and residential proxies for extraction. For websites such as Instagram, Facebook, Twitter, and Google Maps, the Apify Store offers ready-made scraping solutions. Developers can build customized scraping tools for other websites while Apify handles infrastructure and payments.

Apify offers shared IPs and integrates seamlessly with Keboola, Transposit, Airbyte, Zapier, and similar platforms. It supports tools and languages such as Selenium, Python, and PHP.

Apify provides 1,000 free API requests. Beyond that, plans start at US$49/month, with a 20% discount for annual billing. For more information, refer to the pricing page.

- Zenscrape API

Among the many online scraping solutions, Zenscrape is one of the most reliable. It handles large-scale web scraping and resolves common obstacles along the way. Like several tools above, it requires no coding. Thanks to IP rotation, CAPTCHA solving, and other features, Zenscrape lets users extract data even from websites with anti-scraping measures.

Zenscrape provides a user-friendly interface and JavaScript rendering, and it supports many front-end frameworks such as jQuery, Vue, and React. Additionally, Zenscrape does not limit the number of queries per second, and each request is assigned a unique IP address.
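
Zenscrape handles IP rotation on its side. For comparison, if you manage a proxy pool yourself, a minimal round-robin rotation looks like the sketch below: each request goes out through a different proxy until the pool wraps around. The proxy addresses are placeholders from a documentation-only range (TEST-NET-3), and the class name is hypothetical.

```python
from __future__ import annotations

from itertools import cycle
from urllib.request import OpenerDirector, ProxyHandler, build_opener

# Placeholder addresses from a documentation-only range; substitute your own pool.
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


class RotatingProxyClient:
    """Send each request through the next proxy in a round-robin pool."""

    def __init__(self, proxies: list[str]) -> None:
        if not proxies:
            raise ValueError("need at least one proxy")
        self._pool = cycle(proxies)

    def next_opener(self) -> tuple[str, OpenerDirector]:
        """Pick the next proxy and build an opener that routes through it."""
        proxy = next(self._pool)
        opener = build_opener(ProxyHandler({"http": proxy, "https": proxy}))
        return proxy, opener

    def get(self, url: str) -> bytes:
        """Fetch url through the next proxy in the rotation."""
        _, opener = self.next_opener()
        with opener.open(url, timeout=10) as response:
            return response.read()
```

Round-robin is the simplest policy; a production pool would typically also drop proxies that start failing, which this sketch does not do.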

Zenscrape offers a free-forever plan (US$0), a Small plan for US$24.99, a Medium plan for US$79.99, and a Large plan for US$199.99. For more information, refer to the pricing page.

- Import.io

There are plenty of ways to scrape data and mine information from a website, and Import.io is one of the many services that aim to streamline the process. Import.io is a web data platform geared toward e-commerce that helps enterprises build better analytics and provides web scraping assistance. It offers a free, convenient data scraping service that works even on websites that use JavaScript or spread results across many pages.
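
Import.io follows multi-page results for you. When doing it yourself, one common approach is to follow each page's "next" link until there is none. The sketch below assumes the site marks that link with `rel="next"`, which many sites do not, so adapt the check to the real markup; `fetch` is any function that returns a page's HTML, and the names are hypothetical.

```python
from __future__ import annotations

from html.parser import HTMLParser
from typing import Callable, Iterator
from urllib.parse import urljoin


class NextLinkFinder(HTMLParser):
    """Find the first <a> or <link> whose rel attribute includes "next"."""

    def __init__(self) -> None:
        super().__init__()
        self.next_href: str | None = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if tag in ("a", "link") and "next" in rel_values and self.next_href is None:
            self.next_href = attributes.get("href")


def crawl_pages(start_url: str, fetch: Callable[[str], str],
                max_pages: int = 50) -> Iterator[tuple[str, str]]:
    """Yield (url, html) for each page, following rel="next" links."""
    url: str | None = start_url
    seen: set[str] = set()
    # Stop on a missing link, a loop back to a visited page, or the page cap.
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        html = fetch(url)
        yield url, html
        finder = NextLinkFinder()
        finder.feed(html)
        finder.close()
        # Resolve relative hrefs such as "/p2" against the current page URL.
        url = urljoin(url, finder.next_href) if finder.next_href else None
```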

Users can download Import.io for Windows, OS X, and Linux from the website, then install and launch it. Next, create an Import.io account, which is free for up to 250,000 page calls per day, or sign in with a GitHub, Google, or LinkedIn account. For more information, refer to the pricing page.

- Sequentum Content Grabber

Sequentum Content Grabber is another low-code web data extraction tool. It automates the extraction process by adapting to recurring changes in data, code, and environment. The tool is aimed at enterprises that want to cut coding labor and time by creating stand-alone web crawling agents.

This end-to-end data extraction platform can be used both in-house and for outsourced web data extraction. It offers full control over web data extraction, document management, and intelligent process automation (IPA). Users can write scripts and debug the crawling process in C# or VB.NET. The content of almost any website can be extracted and saved as structured data in the desired format.

The annual enterprise license starts at US$15,000. To scale their operations, some enterprises may need additional licenses, which are available at extra cost. Refer to the main website for pricing.