Building and deploying conventional machine learning (ML) models has become challenging due to the increasing volume and complexity of data. On such data, these models can be slow to train or can generate inaccurate results. One emerging approach to these limitations is quantum machine learning.

By utilizing quantum computing technology, quantum ML aims to extend classical ML algorithms and, for certain problems, may improve performance and prediction accuracy. Quantum ML is also being explored for demanding tasks such as developing new materials, drug discovery, and natural language translation.

To build quantum ML models for your specific use cases, you must understand what quantum machine learning is, its advantages, and implementation challenges. Let’s get started!

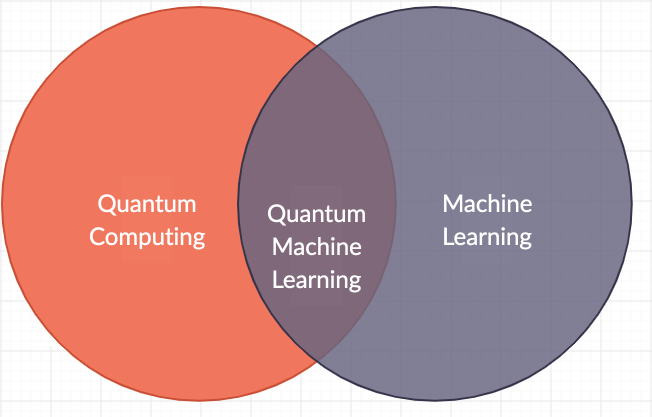

What Is Quantum Machine Learning?

Quantum machine learning (QML) is a field that integrates quantum computing with machine learning, with the goal of outperforming conventional ML models on suitable problems. Quantum computing applies the principles of quantum mechanics to solve certain complex problems faster than classical methods can.

Quantum computers are the devices that put these principles to work. Unlike classical computers, which store data in binary bits, quantum computers use qubits, the quantum equivalent of bits. A bit is always either 0 or 1, whereas a qubit can be in a superposition of 0 and 1 until it is measured. Because the joint state of n qubits is described by 2^n amplitudes, quantum computers can, for certain problems, work in a far larger computational space than classical machines of comparable size.
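To make the difference between a bit and a qubit concrete, here is a minimal sketch in Python with NumPy that simulates a single qubit as a two-component complex vector. The helper names `qubit` and `measurement_probabilities` are illustrative assumptions rather than part of any quantum library, and a classical simulation like this only mimics what real hardware does.

```python
import numpy as np

# Computational basis states |0> and |1> as two-component vectors.
ket0 = np.array([1.0, 0.0], dtype=complex)
ket1 = np.array([0.0, 1.0], dtype=complex)

def qubit(alpha, beta):
    """Return the normalized state alpha|0> + beta|1> (hypothetical helper)."""
    state = alpha * ket0 + beta * ket1
    return state / np.linalg.norm(state)

def measurement_probabilities(state):
    """Born rule: each outcome's probability is its squared amplitude magnitude."""
    return np.abs(state) ** 2

# An equal-weight superposition with a relative phase between |0> and |1>.
psi = qubit(1, 1j)
probs = measurement_probabilities(psi)
print(probs)  # probabilities of measuring 0 and 1
```

Although the state carries two complex amplitudes, a measurement still returns a single classical outcome, 0 or 1, with the probabilities computed above.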

By combining the capabilities of quantum computing with machine learning, you can build quantum ML models that aim to produce accurate outcomes faster than classical approaches on suitable problems.

Why Is There a Need for Quantum Machine Learning?

Classical machine learning models face several challenges that can make them inefficient:

- As the dimensions of training data increase, classical ML models require more computational power to process such datasets.

- Despite parallel processing techniques and advancements in hardware technologies like GPUs and TPUs, classical ML systems have scalability limits. These constraints cap how far you can improve the performance of such models.

- Classical ML models cannot directly process quantum data, the kind of data that is useful for solving complex scientific problems. Converting quantum data into a classical format can lose information, reducing the accuracy of the models.

Quantum machine learning can help address these limitations. In principle, you can train quantum ML models directly on quantum data without first converting it to a classical format. These models can also handle high-dimensional datasets by exploiting quantum mechanical phenomena like superposition and entanglement. Let’s learn about these mechanisms in detail in the next section.

Quantum Mechanical Processes That Help Improve Machine Learning Efficiency

Quantum computing relies on multiple processes that help overcome the limitations of classical machine learning. Let’s look into these processes in detail.

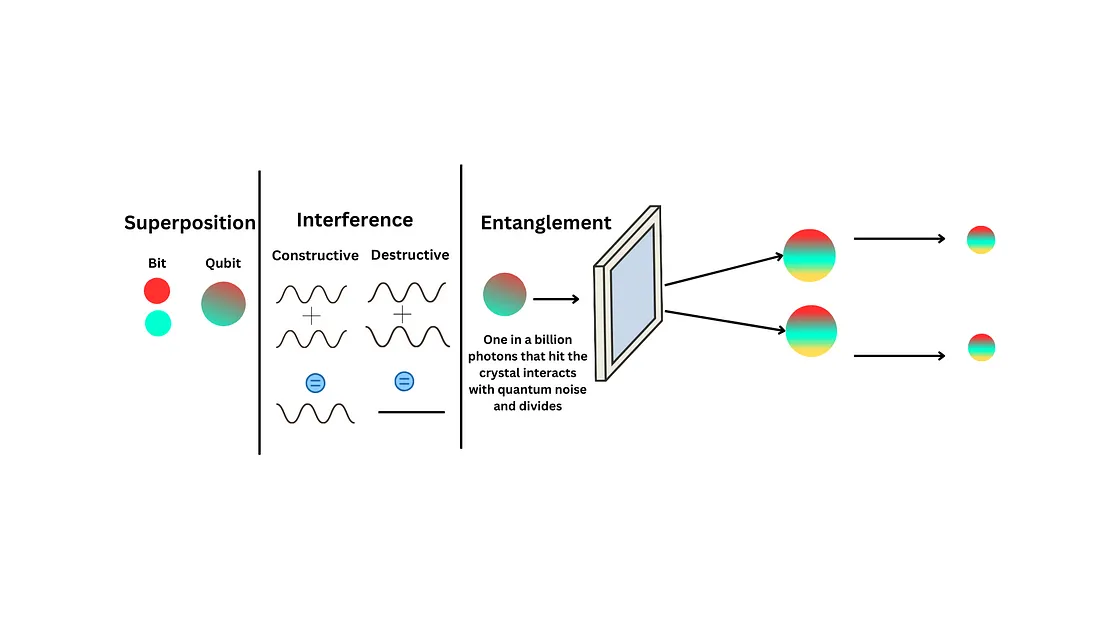

Superposition

Superposition is a principle of quantum mechanics where a quantum system can exist in multiple states simultaneously. This capability allows you to represent high-dimensional data compactly, reducing the use of computational resources.

With superposition, a single quantum operation can also act on many basis states at once, although a measurement returns only one outcome. Well-designed quantum algorithms exploit this to reduce computation time for tasks such as pattern recognition and optimization.
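The compact representation described above can be sketched with NumPy. The example below, a classical simulation under the assumption that every qubit starts in the 0 state, applies a Hadamard gate to each qubit and shows that n qubits span 2^n basis states. The function name `uniform_superposition` is a hypothetical helper chosen for illustration.

```python
import numpy as np

# Hadamard gate: maps |0> to an equal superposition of |0> and |1>.
H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)
ket0 = np.array([1.0, 0.0])

def uniform_superposition(n_qubits):
    """Apply a Hadamard to each of n qubits that all start in |0>."""
    state = np.array([1.0])
    for _ in range(n_qubits):
        # The Kronecker product builds the joint state qubit by qubit.
        state = np.kron(state, H @ ket0)
    return state

state = uniform_superposition(3)
print(len(state))           # number of basis states spanned by 3 qubits
print(np.abs(state) ** 2)   # measurement probability of each basis state
```

Note that the simulation itself needs memory that grows as 2^n, which is exactly why classical machines struggle to emulate larger quantum systems.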

Entanglement

Quantum entanglement is a phenomenon in which the quantum states of two or more systems become correlated so strongly that the state of one cannot be described independently of the others, even if they are separated spatially. In quantum ML, entangled qubits can represent strongly interrelated data features, which helps ML models identify patterns and relationships more effectively.

You can utilize such entangled qubits while training ML models for image recognition and natural language processing tasks.
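As an illustration of entanglement, the following NumPy sketch prepares a two-qubit Bell state by applying a Hadamard gate and then a CNOT gate to two qubits that start in 0. This is a standard textbook construction simulated classically, not a recipe taken from a specific quantum ML model. The rank check at the end is one simple way to test whether a two-qubit pure state is entangled.

```python
import numpy as np

H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)   # Hadamard gate
I = np.eye(2)                                   # identity on one qubit
# CNOT with the first qubit as control and the second as target.
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)

ket00 = np.array([1.0, 0.0, 0.0, 0.0])          # both qubits in |0>

# Hadamard on the first qubit, then CNOT, gives (|00> + |11>) / sqrt(2).
bell = CNOT @ np.kron(H, I) @ ket00
probs = np.abs(bell) ** 2                       # order: 00, 01, 10, 11
print(probs)

# A product (unentangled) state reshapes to a rank-1 matrix;
# rank 2 means the two qubits cannot be described separately.
schmidt_rank = np.linalg.matrix_rank(bell.reshape(2, 2))
print(schmidt_rank)
```

The outcomes 01 and 10 never occur: measuring one qubit fixes the result for the other, which is the kind of correlation the text describes.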

Interference

Interference occurs when the probability amplitudes of a quantum system in superposition combine, reinforcing some outcomes (constructive interference) and cancelling others (destructive interference).

To better understand this concept, let’s consider an example of classical interference. When you drop a stone in a pond, ripples or waves are created. At certain points, two or more waves superpose to form crests or high-amplitude waves, which is called constructive interference. On the other hand, destructive interference arises when waves cancel each other out.
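The quantum counterpart of the pond example can be sketched with a single simulated qubit. In the NumPy snippet below, two Hadamard gates in a row make the amplitudes for one outcome cancel and the amplitudes for the other add up. The variable names are illustrative, and the circuit is a textbook demonstration rather than part of any particular ML algorithm.

```python
import numpy as np

H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)   # Hadamard gate
Z = np.array([[1, 0], [0, -1]])                # flips the sign of the |1> amplitude
ket0 = np.array([1.0, 0.0])

# Two Hadamards in a row: the two paths into |1> carry opposite signs
# and cancel (destructive), while the paths into |0> add up (constructive).
back_to_zero = H @ H @ ket0

# Flipping the phase of |1> between the Hadamards swaps which outcome
# is reinforced, so the qubit ends in |1> instead.
flipped = H @ Z @ H @ ket0

probs_plain = np.abs(back_to_zero) ** 2
probs_flipped = np.abs(flipped) ** 2
print(probs_plain, probs_flipped)
```

Quantum algorithms are designed so that amplitudes of wrong answers cancel in this way while amplitudes of right answers reinforce each other.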

In quantum ML, you can utilize interference in Quantum Support Vector Machines (QSVMs) to streamline pattern recognition and improve the accuracy of classification tasks. QSVMs are supervised learning algorithms used for classification and regression tasks.
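One common way a QSVM is described is as a classical support vector machine that uses a kernel computed from overlaps between quantum states. The sketch below simulates such a kernel with NumPy under a deliberately simple assumption: each scalar input is angle-encoded into one qubit. The functions `feature_map` and `quantum_kernel` are hypothetical names for illustration, and real QSVM implementations typically use richer, multi-qubit feature maps.

```python
import numpy as np

def feature_map(x):
    """Angle-encode a scalar x as the one-qubit state [cos(x/2), sin(x/2)].

    This encoding is an assumption made for the sketch.
    """
    return np.array([np.cos(x / 2), np.sin(x / 2)])

def quantum_kernel(x, y):
    """Kernel value = squared overlap between the two encoded states."""
    return np.abs(np.dot(feature_map(x), feature_map(y))) ** 2

# Three sample inputs and their kernel (similarity) matrix.
X = np.array([0.0, np.pi / 2, np.pi])
K = np.array([[quantum_kernel(a, b) for b in X] for a in X])
print(K)
```

The resulting matrix `K` could then be passed to any classical SVM solver that accepts a precomputed kernel. On hardware, each entry would be estimated from repeated measurements instead of being computed exactly.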

Advantages of Quantum Machine Learning

The processes described above give quantum ML several potential benefits. Here are a few advantages of using quantum ML:

Enhanced Speed of ML Models

Quantum computing can accelerate certain ML computations through qubits and quantum mechanical processes. It can make large datasets with numerous features easier to handle, so that they can be used for model training with fewer computational resources. As a result, quantum ML models have the potential to be both high-performing and resource-efficient.

Recognizing Complex Data Patterns

Some datasets, such as those related to financial analysis or image classification, are complex. Conventional ML models may find it difficult to identify patterns and trends in such datasets. However, quantum machine learning algorithms can help overcome this hurdle using the entanglement phenomenon. This offers superior predictive capabilities by recognizing intricate relationships within the datasets.

Enhanced Reinforcement Learning

Reinforcement learning is a machine learning technique in which models learn to make decisions through trial and error. These models refine themselves continuously based on the feedback they receive while training. Because quantum ML models are capable of advanced pattern recognition, they may accelerate this learning process, enhancing reinforcement learning.

Challenges of Deploying Quantum ML Models

While quantum ML offers some remarkable advantages over classical ML models, it also comes with challenges that you should be aware of before implementing it:

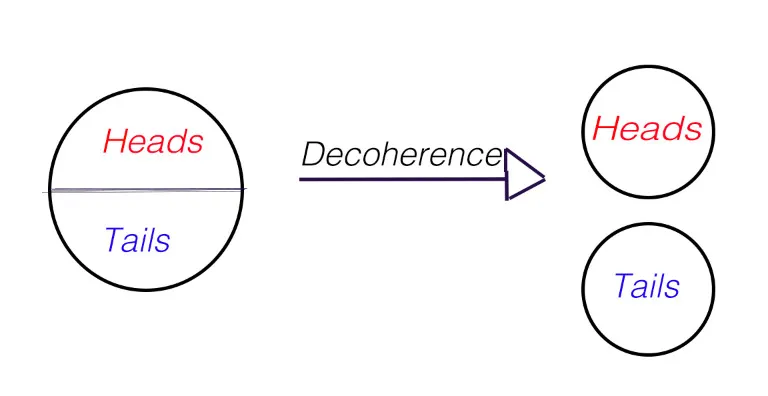

Decoherence

Decoherence is the phenomenon in which a quantum system loses its quantum properties and starts behaving classically. Qubits are sensitive and can lose their coherence when disrupted by even slight noise or disturbances. This loss of coherence can lead to information loss and inaccuracies in the model outcomes.
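A simple way to picture decoherence is a dephasing model, in which noise randomly flips the phase of a qubit. The NumPy sketch below applies such noise to a qubit in an equal superposition and tracks the off-diagonal entry of its density matrix, which measures the remaining coherence. The `dephase` helper and the noise probabilities are assumptions chosen for illustration. Real devices experience more complicated noise.

```python
import numpy as np

Z = np.array([[1, 0], [0, -1]], dtype=float)   # phase-flip operator

def dephase(rho, p):
    """Apply a phase flip with probability p: rho -> (1 - p) rho + p Z rho Z."""
    return (1 - p) * rho + p * (Z @ rho @ Z)

# Density matrix of the equal superposition (|0> + |1>) / sqrt(2).
plus = np.array([1.0, 1.0]) / np.sqrt(2)
rho = np.outer(plus, plus.conj())

for p in (0.0, 0.25, 0.5):
    noisy = dephase(rho, p)
    # The off-diagonal entry shrinks as the noise grows, while the
    # diagonal (the 0/1 probabilities) stays unchanged.
    print(p, noisy[0, 1])
```

When the off-diagonal entries reach zero, the qubit behaves like a classical coin flip between 0 and 1, and the interference effects that quantum algorithms rely on are gone.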

Ineffectiveness of QNN Models

Quantum neural network (QNN) models are parameterized quantum circuits that play a role analogous to classical neural networks. However, QNN models can suffer from barren plateaus, a phenomenon in which the gradients of the cost function with respect to the circuit parameters become vanishingly small, typically as the number of qubits grows. With almost no gradient signal, the optimizer cannot tell which way to adjust the parameters, which can significantly hinder training and reduce the efficiency of QNN models.
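The vanishing-gradient effect can be illustrated with a toy model. The sketch below assumes a deliberately simple circuit: each qubit gets one RY rotation and the cost is the expectation value of Z on every qubit at once, which for this product state equals the product of the cosines of the angles. The gradient is computed with the parameter-shift rule, and its variance over random parameters is estimated for different qubit counts. All function names are hypothetical, and this toy is far simpler than a realistic QNN.

```python
import numpy as np

def cost(thetas):
    """Toy cost: <Z x ... x Z> for RY(theta_i)|0> on each qubit = prod(cos(theta_i))."""
    return np.prod(np.cos(thetas))

def parameter_shift_grad(thetas, index):
    """Gradient of the cost w.r.t. thetas[index] via the parameter-shift rule."""
    shift = np.zeros_like(thetas)
    shift[index] = np.pi / 2
    return 0.5 * (cost(thetas + shift) - cost(thetas - shift))

def gradient_variance(n_qubits, n_samples=2000, seed=0):
    """Estimate the variance of the first gradient component over random angles."""
    rng = np.random.default_rng(seed)
    grads = [
        parameter_shift_grad(rng.uniform(0, 2 * np.pi, n_qubits), 0)
        for _ in range(n_samples)
    ]
    return np.var(grads)

for n in (2, 4, 8):
    # The variance drops sharply as qubits are added.
    print(n, gradient_variance(n))
```

In this toy model the typical gradient magnitude shrinks rapidly with the number of qubits, so a randomly initialized optimizer sees an almost flat cost landscape.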

Infrastructural Inaccessibility

Quantum ML requires access to quantum computers, which are costly and demand extensive maintenance. Some cloud-based quantum computing platforms exist, but current offerings are often inadequate for robust training of complex ML models. You also need to invest in tools to prepare the datasets used to train the quantum models, which further increases implementation costs.

Lack of Technical Expertise

Quantum technology is still at an early stage of development, and its combination with machine learning is newer still. This makes it difficult to find skilled professionals who are experts in both disciplines. To hire suitable candidates, you may need to offer substantial salaries, which can strain the budget for other organizational operations.

Use Cases of Quantum Machine Learning

According to a report by Grand View Research, the quantum AI market size reached USD 256 million in 2023 and is expected to grow at a CAGR of 34.4% from 2024 to 2030. This suggests substantial growth in the adoption of quantum AI and machine learning-based solutions.

Some of the sectors that can leverage quantum ML are:

Finance

Because quantum ML models have the potential to produce highly accurate predictions, you can use them to analyze financial market data and optimize portfolio management. By leveraging quantum ML models, you can also identify suspicious monetary transactions to detect and prevent fraud.

Healthcare

You can utilize quantum ML models to process large datasets, such as records of chemical compounds, and analyze molecular interactions for faster drug discovery. Quantum ML models also assist in the recognition of patterns from genomic datasets to predict genetics-related diseases.

Marketing

Quantum ML models allow you to provide highly personalized recommendations to customers by assessing their behavior and purchase history. You can also use this information to create targeted advertising campaigns, resulting in improved customer engagement and enhanced ROI.

Conclusion

Quantum ML is a rapidly developing domain that has the potential to reshape machine learning and artificial intelligence. This article explained what quantum machine learning is and outlined its advantages, most notably potential improvements in model speed and accuracy.

However, quantum ML models also present some limitations, such as decoherence and infrastructural complexities. Knowing these drawbacks makes you aware of potential deployment challenges, so you can plan for them when developing a quantum machine learning model.

FAQs

What is a qubit?

A qubit is the quantum mechanical counterpart of the classical binary bit. It is the basic unit of information in quantum computers. A qubit can exist in the state 0, the state 1, or any superposition of the two. Measuring a qubit still yields a single 0 or 1, but n qubits together are described by 2^n amplitudes, which lets quantum algorithms work with far richer states than the same number of classical bits.

What is quantum AI?

Quantum AI is a technology that combines artificial intelligence and quantum computing to perform tasks that typically require human intelligence. One of the most important components of quantum AI is the quantum neural network (QNN), a quantum machine learning algorithm. You can use quantum AI in fields such as finance and physical science research to recognize patterns and solve advanced problems.