Being watched by facial recognition systems is no longer a novelty. Facial recognition has already transformed biometric verification and saw widespread adoption during COVID-19 restrictions, but the biometrics field is now on the verge of new trends. One of them is gait recognition, including approaches that rely on motion sensors. Like a fingerprint, an individual’s gait is thought to be unique. Gait recognition is expected to soon supplement existing biometric technologies such as facial recognition, voice recognition, iris recognition and fingerprint verification for enhanced security.

A fictional version appears in the movie Mission Impossible: Rogue Nation, where the protagonist’s sidekick Benji has to walk across a room in which gait analysis is performed on him before he can retrieve a secret Syndicate file. In the movie, the gait analysis system observes how an agent talks and walks, and even their facial tics; any mismatch results in the agent being tased. In the real world, gait recognition is touted to work similarly.

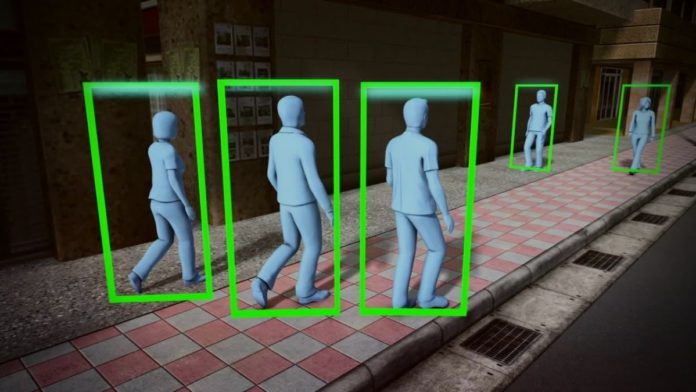

Machine learning forms the foundation of gait recognition tools. Even if a person’s face is turned away from the camera or hidden behind a mask, ML-based computer vision systems can recognize them from a video.

The software looks at the person’s silhouette, height, pace, and walking patterns and matches them against a database. Some algorithms are designed to interpret visual information, while others use sensor data. Irrespective of the nature of the data, the overall process involves capturing data, silhouette segmentation, contour detection, and feature extraction with classification. In public locations, this technology is more convenient than retinal scanners or fingerprint readers since it is less intrusive. Further, as mentioned earlier, gait recognition is considered hard to fool, since no two people’s gaits are believed to be exactly the same.
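
The four stages named above can be sketched on a toy example. This is a minimal illustration, not how any particular product works: the tiny grayscale frame, the fixed threshold, the bounding-box features, the nearest-neighbor matcher and the gallery names are all assumptions made for the example.

```python
from math import dist

def segment_silhouette(frame, background, threshold=30):
    """Background subtraction: 1 where a pixel differs from the empty scene."""
    return [[1 if abs(p - b) > threshold else 0 for p, b in zip(row, bg_row)]
            for row, bg_row in zip(frame, background)]

def contour_pixels(mask):
    """Foreground pixels that touch a background pixel or the image border."""
    h, w = len(mask), len(mask[0])
    contour = []
    for y in range(h):
        for x in range(w):
            if not mask[y][x]:
                continue
            neighbours = [(y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)]
            if any(not (0 <= ny < h and 0 <= nx < w) or not mask[ny][nx]
                   for ny, nx in neighbours):
                contour.append((y, x))
    return contour

def extract_features(contour):
    """Hypothetical feature vector: silhouette height and width in pixels."""
    ys = [y for y, _ in contour]
    xs = [x for _, x in contour]
    return (max(ys) - min(ys) + 1, max(xs) - min(xs) + 1)

def classify(features, gallery):
    """Nearest-neighbour match against enrolled feature vectors."""
    return min(gallery, key=lambda name: dist(features, gallery[name]))

# Toy data: an empty background and one captured frame with a bright figure.
background = [[0] * 4 for _ in range(5)]
frame = [
    [0, 200, 0, 0],
    [0, 200, 0, 0],
    [200, 200, 200, 0],
    [0, 200, 0, 0],
    [200, 0, 200, 0],
]

mask = segment_silhouette(frame, background)
contour = contour_pixels(mask)
features = extract_features(contour)
gallery = {"alice": (5, 3), "bob": (4, 2)}  # made-up enrolled subjects
match = classify(features, gallery)
```

Real systems work on sequences of frames and learn far richer features than a bounding box; the sketch only shows how the stages hand data to one another.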

Scientists are now striving to improve recognition systems using the data and models gathered so far. Because each footfall is distinct, the identification algorithms are constantly confronted with new data. The more gait variations an algorithm has seen, the better it can assess future data.

Because no algorithm is perfect and there is always room for improvement, new capture methods and algorithms are continually being tested to address the shortcomings of present gait recognition systems. While video camera data is already used for gait analysis, researchers are also working on gait recognition based on on-body sensors, sensors in mobile phones or smart wearable devices, radar input, and so on.

Gait biometrics is still in its infancy, but it has already found an interesting application: detecting human emotions from how people walk. Gait recognition combines spatial-temporal characteristics like step length, step width, walking speed, and cycle time with kinematic parameters like ankle, knee, and hip joint rotation; mean joint angles of the ankle, knee, hip, and thigh; and trunk and foot angles. The relationship between step length and a person’s height is also taken into account. All these factors change depending on the mood of an individual. A person who has just learned that they won the lottery will have a different stride than someone who is bereaved or pacing nervously.
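
To make the spatial-temporal characteristics concrete, here is a minimal sketch that derives them from a list of heel strikes. The `HeelStrike` record, its fields and the sample walk are assumptions for illustration; the sketch stops at feature computation and does not attempt the kinematic parameters or the emotion classification itself.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class HeelStrike:
    t: float   # time of the heel strike, seconds
    x: float   # forward position, metres
    y: float   # lateral position, metres
    foot: str  # "L" or "R"

def gait_features(strikes, height_m):
    """Spatial-temporal gait features from alternating heel strikes."""
    steps = list(zip(strikes, strikes[1:]))  # consecutive strikes = one step
    step_length = mean(b.x - a.x for a, b in steps)
    step_width = mean(abs(b.y - a.y) for a, b in steps)
    speed = (strikes[-1].x - strikes[0].x) / (strikes[-1].t - strikes[0].t)
    # A gait cycle runs from one strike of a foot to the next strike of that foot.
    cycle_time = mean(b.t - a.t for a, b in zip(strikes, strikes[2:])
                      if a.foot == b.foot)
    return {
        "step_length_m": step_length,
        "step_width_m": step_width,
        "walking_speed_m_s": speed,
        "cycle_time_s": cycle_time,
        "step_length_to_height": step_length / height_m,
    }

# Made-up walk: five strikes, one every 0.5 s, 0.7 m apart.
walk = [
    HeelStrike(0.0, 0.0, 0.05, "L"),
    HeelStrike(0.5, 0.7, -0.05, "R"),
    HeelStrike(1.0, 1.4, 0.05, "L"),
    HeelStrike(1.5, 2.1, -0.05, "R"),
    HeelStrike(2.0, 2.8, 0.05, "L"),
]
features = gait_features(walk, height_m=1.75)
```

A feature dictionary like this one could then be fed to a classifier; dividing step length by height is one simple way to account for the relationship between the two.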

Analyzing these factors can help identify nonverbal behavioral cues and help with our social skills. Researchers at the University of Maryland created an algorithm called ProxEmo a few years ago, which allowed a small wheeled robot (Clearpath’s Jackal) to assess a person’s gait in real time and anticipate how they might be feeling. The robot could choose its course to give the person more or less space based on the detected emotion. Though this could appear to be a minor milestone in robot-human relations, scientists believe that future machines will be clever enough to interpret an unhappy person’s walk and attempt to comfort them.

The team presented a multi-view, 16-joint skeleton graph convolution-based model for classifying emotions that works with a commodity camera mounted on the Jackal robot. The emotion identification system was integrated into a mapless navigation scheme, and deep learning techniques were used to train the system to correlate various skeleton gaits with the emotions that human volunteers attributed to the walkers. On the Emotion-Gait benchmark dataset, it achieved a mean average emotion prediction precision of 82.47%. The dataset is available online.

Last year, researchers from the University of Plymouth presented an experimental smartphone security system based on gait authentication. The solution uses the smartphone’s motion sensors to record data on users’ stride patterns. Preliminary results, based on 44 participants, indicate that the system is between 85% and 90% accurate in recognizing an individual’s gait.
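
The article does not describe how the Plymouth system processes its sensor data, so the following is only a rough sketch of the general idea of turning accelerometer readings into a stride pattern. The peak-detection rule, the threshold, the tolerance, the sample rate and the synthetic readings are all assumptions.

```python
from math import sqrt

def magnitude(samples):
    """Overall acceleration per (ax, ay, az) sample, in m/s^2."""
    return [sqrt(ax * ax + ay * ay + az * az) for ax, ay, az in samples]

def detect_steps(mag, threshold):
    """Indices of local maxima above the threshold, treated as footfalls."""
    return [i for i in range(1, len(mag) - 1)
            if mag[i] > threshold and mag[i] > mag[i - 1] and mag[i] >= mag[i + 1]]

def mean_step_interval(step_indices, sample_rate_hz):
    """Average time between detected footfalls, in seconds."""
    gaps = [b - a for a, b in zip(step_indices, step_indices[1:])]
    return sum(gaps) / len(gaps) / sample_rate_hz

def matches_template(interval, enrolled_interval, tolerance=0.05):
    """Crude check: is the walking rhythm close to the enrolled user's?"""
    return abs(interval - enrolled_interval) <= tolerance

# Synthetic 10 Hz recording: gravity (9.8) with a spike at every footfall.
samples = [(0.0, 0.0, az) for az in [9.8, 9.8, 13.0, 9.8, 9.8] * 4]

mag = magnitude(samples)
steps = detect_steps(mag, threshold=11.0)
interval = mean_step_interval(steps, sample_rate_hz=10)
accepted = matches_template(interval, enrolled_interval=0.52)
rejected = matches_template(interval, enrolled_interval=0.70)
```

A single step interval is far too weak to identify anyone on its own; a practical system would compare many features of the signal, but the sketch shows where such features come from.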

Gait recognition promises new avenues for biometric identification; however, its success will depend on whether it can be deployed without violating public privacy.