Every major AI model you use today is built on the transformer architecture. And every transformer carries the same structural cost: attention compute scales quadratically with context length. Double your input, quadruple your cost. This is not a product limitation. It is a fundamental property of how transformers work, and the entire industry has spent years building workarounds rather than fixing the underlying problem.

On May 5, 2026, a startup called Subquadratic claimed to have fixed it.

The company launched SubQ 1M-Preview, which it describes as the first large language model built on a fully sub-quadratic attention architecture. The model runs on a mechanism called Subquadratic Sparse Attention (SSA), and the core claim is that compute scales linearly with context length. At 1 million tokens, the company reports a 52.2x prefill speedup and a 62.5x reduction in attention FLOPs compared with standard dense (quadratic) attention.

The company raised $29 million in seed funding from investors including Javier Villamizar of Vision Fund, Justin Mateen (co-founder of Tinder), and early backers of Anthropic, OpenAI, Stripe, and Brex. The team of 11 PhD researchers comes from Meta, Google, Oxford, Cambridge, ByteDance, Adobe, and Microsoft.


Why the transformer’s quadratic scaling problem has resisted a fix

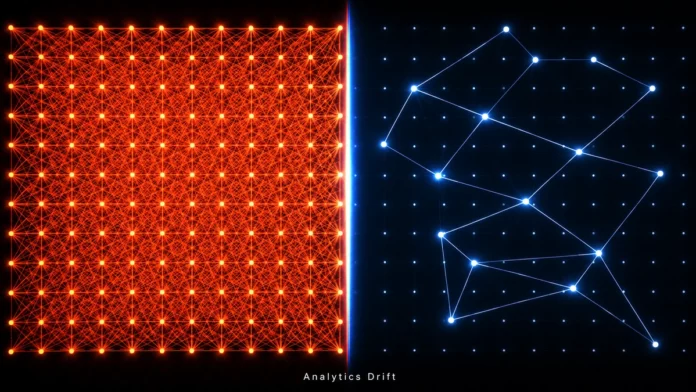

In a standard transformer, every token in a prompt compares itself against every other token to determine what to attend to. If you have 1,000 tokens, that is 1,000,000 comparisons. At 10,000 tokens, it is 100,000,000. At 1 million tokens, it is one trillion, and the compute becomes prohibitive. Cost does not grow in step with the input. It grows with the square of it.
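The arithmetic above can be reproduced in a few lines. This is a minimal sketch that counts query-key comparisons for a single attention head in a single layer; the function name is illustrative, and real models multiply this count by the number of heads and layers.

```python
def pairwise_comparisons(n_tokens: int) -> int:
    """Query-key comparisons in dense self-attention (one head, one layer)."""
    return n_tokens * n_tokens

for n in (1_000, 10_000, 1_000_000):
    print(f"{n:>9,} tokens -> {pairwise_comparisons(n):,} comparisons")

# Doubling the input quadruples the work.
assert pairwise_comparisons(2_000) == 4 * pairwise_comparisons(1_000)
```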

This is why most AI workflows are built around workarounds. RAG systems retrieve small chunks of relevant text instead of feeding entire documents. Agentic pipelines break large tasks into smaller model calls, compressing and losing context at every boundary. Developers spend more engineering time on scaffolding (chunking strategies, retrieval pipelines, prompt compression) than on the actual problem they need to solve.

The research community has attempted sub-quadratic attention mechanisms for years. Every prior approach made a tradeoff that the industry considered unacceptable. Fixed-pattern sparse attention reduces compute but routes attention based on position, not content. When the relevant information sits outside the fixed pattern, the model cannot see it. State space models and recurrent architectures achieve linear scaling but compress context into a fixed-capacity state, degrading exact retrieval as sequence length grows. Hybrid architectures retain dense attention layers for retrieval and efficient layers for cost, but the dense layers remain load-bearing. As context grows, their quadratic cost still dominates.
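To make the fixed-pattern failure mode concrete, here is a minimal sketch using a causal sliding window, one common fixed pattern. The function name, window size, and token positions are illustrative assumptions, not taken from any particular model.

```python
def sliding_window_positions(query_pos: int, window: int) -> range:
    """Positions a query may attend to under a causal sliding window.

    The set depends only on where the query sits, never on what the tokens say.
    """
    start = max(0, query_pos - window + 1)
    return range(start, query_pos + 1)

# Illustrative numbers: the relevant fact sits near the start of a long prompt.
needle_pos = 3
query_pos = 9_999
visible = sliding_window_positions(query_pos, window=512)

print(len(visible))           # how many positions the query can attend to
print(needle_pos in visible)  # whether the relevant token is reachable at all
```

However relevant the token at `needle_pos` is, the pattern never lets the query look at it, which is the limitation a content-dependent mechanism is meant to remove.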

The open problem was precise: build a mechanism that is efficient, content-dependent, and capable of retrieving from arbitrary positions across long context. That is what Subquadratic Sparse Attention (SSA) is designed to do.

How SSA works

SSA changes how attention work is allocated. Instead of comparing every token against every other token, it uses content-dependent selection. For each query, the model identifies which positions in the sequence actually carry signal and computes attention exactly over those positions, skipping the rest.

Dense attention assumes every pair might matter and evaluates all of them. In practice, the vast majority of pairwise interactions carry negligible signal, but the model still pays the full quadratic cost to compute them. SSA removes that assumption. It does not approximate attention. It restricts attention to the positions that actually matter, based on meaning rather than position.

This gives SSA three properties that matter together: linear scaling in compute and memory, content-dependent routing regardless of where relevant information sits in the sequence, and exact retrieval from arbitrary positions rather than compressed or blurred context.
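The general idea of selecting positions by content and then computing exact attention over only those positions can be sketched as follows. This is not Subquadratic's implementation: the article does not describe how SSA picks positions, so `select_positions` is a hypothetical stand-in that scores every key by brute force. That stand-in is linear per query and therefore still quadratic over a full sequence; the sketch illustrates only the "exact attention over a selected subset" half of the idea.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dense_attention(query, keys, values):
    """Exact softmax attention for one query over all given positions."""
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, k) / scale for k in keys])
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]

def select_positions(query, keys, top_k):
    """Hypothetical selector: keep the top_k keys by raw dot-product score.

    Brute force for illustration only; a real sub-quadratic method would
    need a cheaper way to find these positions.
    """
    scores = [dot(query, k) for k in keys]
    ranked = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:top_k])

def sparse_attention(query, keys, values, top_k):
    """Exact attention restricted to the content-selected positions."""
    picked = select_positions(query, keys, top_k)
    return dense_attention(
        query, [keys[i] for i in picked], [values[i] for i in picked]
    )

# Toy data: ten positions, with one key (position 7) matching the query.
keys = [[0.0, 1.0] for _ in range(10)]
keys[7] = [4.0, 0.0]
values = [[float(i)] for i in range(10)]
query = [4.0, 0.0]

print(select_positions(query, keys, top_k=2))
print(sparse_attention(query, keys, values, top_k=2))
print(dense_attention(query, keys, values))
```

In this toy case the selected subset contains the matching position wherever it sits in the sequence, and the sparse output stays close to the dense one because the skipped positions carried almost no attention weight.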

The speedup compounds as context grows. Measured against FlashAttention on NVIDIA B200 GPUs, SSA achieves a 7.2x input-processing (prefill) speedup at 128K tokens, 13.2x at 256K, 23.0x at 512K, and 52.2x at 1 million tokens. The mechanism becomes more advantageous exactly where long-context workloads become most valuable.
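One way to sanity-check these figures: if dense attention cost grows with n² and the sparse mechanism's cost grows with n, the speedup itself should grow roughly in proportion to n, so each doubling of context should roughly double it. The sketch below computes the step-to-step ratios from the reported numbers. The 2x baseline is an assumption of this simplified model, and end-to-end prefill includes non-attention work, so exact agreement is not expected.

```python
# Speedups reported in the article: (context label, speedup vs FlashAttention).
reported = [("128K", 7.2), ("256K", 13.2), ("512K", 23.0), ("1M", 52.2)]

def step_ratios(points):
    """Ratio of each speedup to the previous one (one context doubling apart)."""
    return [
        (f"{a_label} -> {b_label}", b / a)
        for (a_label, a), (b_label, b) in zip(points, points[1:])
    ]

for label, ratio in step_ratios(reported):
    print(f"{label}: speedup grows {ratio:.2f}x (simple model predicts ~2x)")
```

The ratios fall between roughly 1.7x and 2.3x per doubling, which is in the neighborhood the simple model predicts.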

The benchmarks

Subquadratic published third-party-verified results for SubQ across three benchmarks.

On RULER at 128K tokens, a standard long-context reasoning benchmark covering multi-hop retrieval, aggregation, and variable tracking, SubQ scores 95.0%. Claude Opus 4.6 scores 94.8%. The models are effectively at parity.

On SWE-Bench Verified, which tests real-world software engineering on actual GitHub issues, SubQ scores 81.8%. Claude Opus 4.6 scores 80.8%. Gemini 3.1 Pro scores 80.6%. Claude Opus 4.7 leads the field at 87.6%, but SubQ holds its own against the previous generation of frontier models.

The most revealing result is MRCR v2, the hardest long-context retrieval benchmark. It tests the ability to locate and combine multiple non-adjacent pieces of evidence distributed across a large document, which is the closest proxy to real enterprise workloads. SubQ scores 65.9%. GPT-5.5 scores 74.0%. Claude Opus 4.6 leads at 78.3%. Claude Opus 4.7 and Gemini 3.1 Pro fall below SubQ at 32.2% and 26.3% respectively.

One figure worth tracking: Subquadratic’s research model scores 83 on MRCR v2, about 17 points above its production model. The company discloses this openly. A full technical report and model card are forthcoming.

The economics of linear scaling

Subquadratic prices SubQ at one-fifth the cost of leading frontier models. At the 12-million-token context window its research targets, the company says attention compute drops nearly 1,000x compared with standard transformer models.

The implications for enterprise workloads are direct. Reasoning over entire codebases, full contract libraries, months of operational logs, and long-running agent sessions is currently cost-prohibitive or requires elaborate scaffolding to manage. If SSA performs as described at that scale, those workloads become economically viable without workarounds.

The company is offering access through two products: an OpenAI-compatible API for developers and enterprise teams, and SubQ Code, a coding agent that plugs directly into Claude Code, Codex, and Cursor. SubQ Code is designed to handle token-heavy context tasks at a claimed 25% lower cost, with a claimed 10x faster codebase exploration.

What this means for the industry

That a seed-stage startup claims to have solved the transformer’s quadratic scaling problem carries an uncomfortable implication. Every major lab, including OpenAI, Anthropic, and Google DeepMind, has known about this ceiling for years. That none of them has shipped a production sub-quadratic model is either evidence that the problem is genuinely hard to solve without sacrificing accuracy, or evidence that their existing transformer investments complicate the incentives to replace the architecture.

A company with $29 million in seed funding claiming to have done what frontier labs have not demands scrutiny proportional to the claim. The full technical report is still pending. The gap between the research and production MRCR v2 scores is real and worth watching across future evaluations. Peer review has not yet arrived.

What is not in question is the significance of the claim. If SubQ scales as advertised, and reliable, efficient 12-million-token contexts become a production reality, the entire scaffolding layer of modern AI development becomes unnecessary overhead. The question is not whether the problem is worth solving. It is whether Subquadratic has actually solved it.