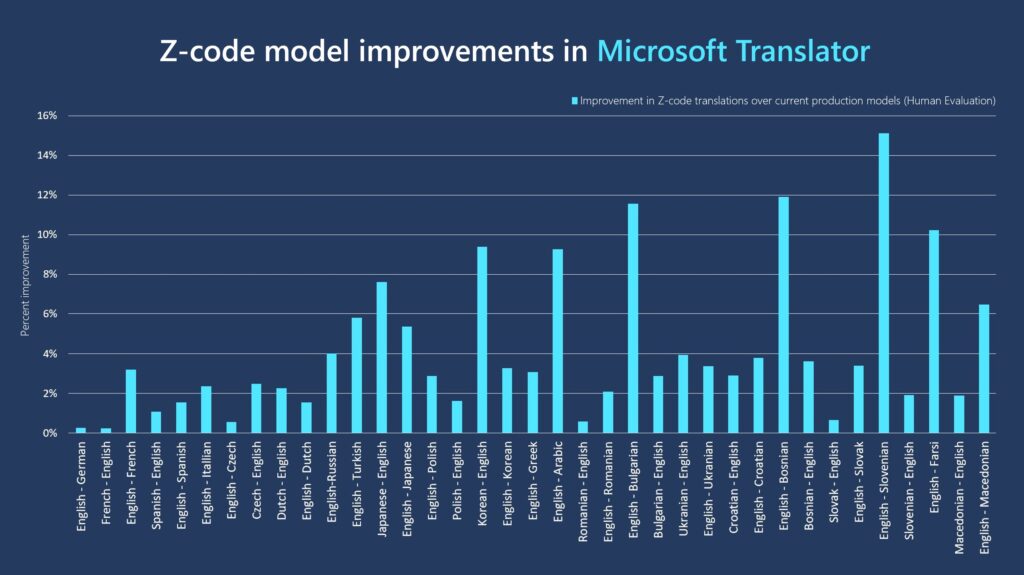

Microsoft has announced an improvement to its translation services that promises significantly better translations across a range of language pairs, thanks to new machine learning models based on Microsoft’s Project Z-code. These improvements raise the quality of machine translations and help Translator, a Microsoft Azure Cognitive Service that is part of Azure AI, offer languages beyond the most common ones, including languages with limited training data. Z-code is part of Microsoft’s XYZ-code initiative, which aims to combine text, vision, and audio models across multiple languages to produce more powerful and useful AI systems. The update employs a “sparse Mixture of Experts” (MoE) technique, and in blind evaluations the new models scored between 3% and 15% higher than the company’s earlier models.

Last October, Microsoft updated Translator with Z-code upgrades, adding support for 12 more languages, including Georgian, Tibetan, and Uyghur. The newly released version of Z-code, called Z-code Mixture of Experts, can better capture the linguistic subtleties of “low-resource” languages. According to Microsoft, Z-code uses transfer learning, an AI approach that applies knowledge learned on one task to another, similar task, to improve translation for the estimated 1,500 low-resource languages worldwide.

Like other translation models, Microsoft’s model learned from examples in enormous datasets gathered from both public and private archives (e.g., ebooks, websites such as Wikipedia, and hand-translated documents). Low-resource languages are those with fewer than one million example sentences, which makes building models for them harder, because AI models generally perform better when trained on more examples.

The new models use a Mixture of Experts architecture to learn to translate between multiple languages simultaneously. The idea is to split a task into several subtasks, which are then delegated to smaller, more specialized models known as “experts.” The model has more parameters overall, but for each input it dynamically picks which parameters to use, relying on its own predictions to decide which expert should handle which part of the task. This lets subsets of the parameters (the experts) specialize during training. At runtime, the model activates only the appropriate experts for each input, which is more computationally efficient than using all of the model’s parameters. In other words, it is a single model that contains multiple more specialized models.
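Microsoft has not published Translator’s routing code, so the following is only a minimal, framework-free sketch of generic top-k sparse gating, the idea described above. It assumes hypothetical linear experts and a linear gate (real Z-code experts are much larger neural sub-networks trained end to end), and the names `SparseMoELayer`, `route`, and `make_expert` are illustrative only. It shows the key property: the layer holds eight experts’ worth of parameters, but each input runs through just two of them.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Matrix = List[Vector]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a short list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix: Matrix, x: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in matrix]


def make_expert(weights: Matrix) -> Callable[[Vector], Vector]:
    """Hypothetical expert: a single linear map (real experts are feed-forward blocks)."""
    return lambda x: matvec(weights, x)


class SparseMoELayer:
    """Toy sparse MoE layer: a gate routes each input to only k of the experts."""

    def __init__(self, experts: List[Callable[[Vector], Vector]], gate: Matrix, k: int = 2) -> None:
        if len(gate) != len(experts) or not 1 <= k <= len(experts):
            raise ValueError("need one gate row per expert and 1 <= k <= num_experts")
        self.experts = experts
        self.gate = gate                  # one row of gating weights per expert
        self.k = k
        self.calls = [0] * len(experts)   # how many times each expert actually ran

    def route(self, x: Vector) -> List[Tuple[int, float]]:
        """Score every expert, keep the top k, and renormalise their scores."""
        logits = matvec(self.gate, x)
        ranked = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
        top = ranked[: self.k]
        return list(zip(top, softmax([logits[i] for i in top])))

    def __call__(self, x: Vector) -> Vector:
        """Run only the selected experts and mix their outputs by gate weight."""
        combined: Vector = []
        for idx, weight in self.route(x):
            self.calls[idx] += 1
            y = self.experts[idx](x)
            if not combined:
                combined = [0.0] * len(y)
            combined = [c + weight * yi for c, yi in zip(combined, y)]
        return combined


# Usage: 8 hypothetical experts, but each token only runs 2 of them.
rng = random.Random(0)
DIM, NUM_EXPERTS = 4, 8


def random_matrix(rows: int, cols: int) -> Matrix:
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


layer = SparseMoELayer(
    experts=[make_expert(random_matrix(DIM, DIM)) for _ in range(NUM_EXPERTS)],
    gate=random_matrix(NUM_EXPERTS, DIM),
    k=2,
)
tokens = [[rng.uniform(-1.0, 1.0) for _ in range(DIM)] for _ in range(5)]
outputs = [layer(t) for t in tokens]
print("expert calls per expert:", layer.calls)
print(f"active experts per token: {layer.k} of {NUM_EXPERTS}")
```

Keeping `k` small is what makes a sparse MoE cheap at inference time: per-input compute grows with `k`, while total model capacity grows with the number of experts.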

A single new system can translate between ten languages, eliminating the need for multiple systems. Previously, Translator required 20 separate models to cover those ten languages: one for English to French, one for French to English, one for English to Macedonian, one for Macedonian to English, and so on. Microsoft has also recently begun using Z-code models to improve other AI capabilities, such as entity recognition, text summarization, custom text classification, and key phrase extraction.
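The model count above follows from simple arithmetic. The sketch below assumes, as the example pairs suggest, that the ten languages are non-English languages each paired with English in both directions; the function names are hypothetical. For contrast, it also counts the bilingual models that would be needed if every ordered pair of the ten languages had its own system.

```python
def english_centric_model_count(num_languages: int) -> int:
    """Bilingual models needed if each language is paired with English both ways.

    Assumption: num_languages counts the non-English languages only.
    """
    return 2 * num_languages


def all_pairs_model_count(num_languages: int) -> int:
    """Bilingual models needed to cover every ordered pair of languages directly."""
    return num_languages * (num_languages - 1)


print(english_centric_model_count(10))  # 20
print(all_pairs_model_count(10))  # 90
```

Under either scheme, the number of bilingual systems grows with the number of languages, whereas the multilingual Z-code MoE system replaces them with one model.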

MoEs were first proposed in the 1990s, and later research publications from organizations such as Google describe experiments with MoE language models containing trillions of parameters. However, Microsoft asserts that Z-code MoE is the first MoE language model to be used in production.

Microsoft researchers worked with NVIDIA to deploy Z-code Mixture of Experts models in production for the first time, running on NVIDIA GPUs. NVIDIA developed custom CUDA kernels, built with the CUTLASS and FasterTransformer libraries, to implement MoE layers efficiently on a single V100 GPU. The models were deployed on the NVIDIA Triton Inference Server with a more efficient runtime optimized for this new type of model. Compared with a standard GPU (PyTorch) runtime, the new runtime achieved up to a 27x speedup.

The Z-code team also collaborated with Microsoft DeepSpeed researchers to learn how to train large Mixture of Experts models such as Z-code, as well as smaller Z-code models for production settings.

Microsoft used human evaluation to compare the quality of the new MoE models against the existing production system and found that Z-code MoE systems outperformed individual bilingual systems, with an average improvement of 4%. For example, the models improved English to French translations by 3.2%, English to Turkish by 5.8%, Japanese to English by 7.6%, English to Arabic by 9.3%, and English to Slovenian by 15%.

The Z-code-based MoE translation models are now available, by invitation, to customers using Translator’s Document Translation feature. Document Translation lets you translate entire documents, or large batches of documents, in a variety of file formats while preserving their original formatting. Microsoft also notes that Translator is the first machine translation provider to offer customers live Z-code Mixture of Experts models.