Since automakers set out to bring autonomous vehicles to the roads, there has been a steady stream of claims, incidents, and investigations alleging that these vehicles are unsafe. Most recently, Tesla's Full Self-Driving (FSD) Beta software reportedly failed to detect a stationary, child-sized mannequin while traveling at an average speed of 25 mph, according to track tests by the Dawn Project. Now imagine the blow to the industry from a new study showing that autonomous vehicles can be fooled with precisely timed lasers.

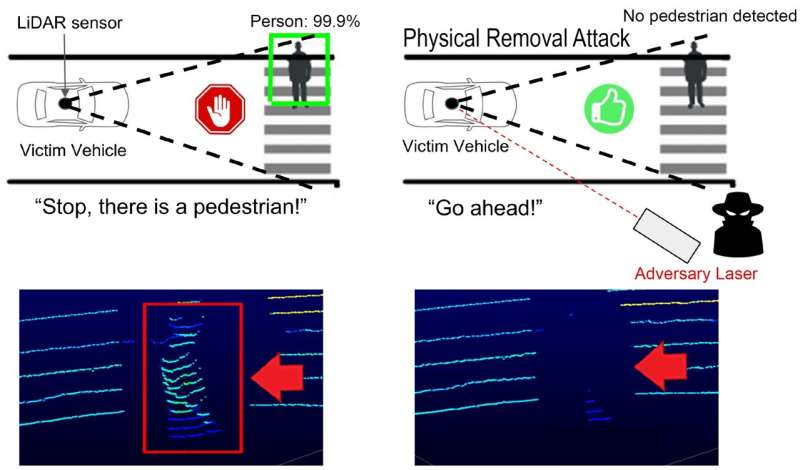

Researchers from the United States and Japan have shown that a laser attack could be used to blind autonomous vehicles and erase people from their field of view, endangering anyone in their path. According to the research, precisely timed lasers aimed at an approaching vehicle's LIDAR (Light Detection and Ranging) system can create a blind spot in front of the vehicle large enough to completely hide moving pedestrians and other obstacles. Because the erased data gives the car a false impression that the road ahead is clear, anything inside the attack's blind zone is put in danger. The security flaw was discovered by researchers from the University of Florida, the University of Michigan, and the University of Electro-Communications in Japan.

LIDAR is a laser-based counterpart to radar, often built as a spinning sensor, that autonomous vehicles use to measure the distance to objects along their route by emitting laser pulses and timing how long their reflections take to return. In essence, LIDAR helps the vehicle perceive its surroundings. The researchers used a laser to mimic the reflections the sensor expects to receive. According to Sara Rampazzi, a UF professor of computer and information science and engineering who led the study, when the spoofed laser pulses were present, the sensor discounted the genuine reflections coming from real obstacles and therefore failed to detect them.
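To make the ranging principle concrete, here is a minimal sketch of the time-of-flight arithmetic a LIDAR relies on: distance is half the speed of light multiplied by the pulse's round-trip time. The function names are hypothetical, the sketch uses the vacuum speed of light as an approximation, and it ignores everything a real automotive sensor adds (calibration, multiple returns per pulse, noise filtering). It is not the researchers' attack code; it only shows why the timing of any pulse the sensor receives translates directly into a perceived distance.

```python
# Speed of light in a vacuum, in m/s (close enough to air for this sketch).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to a target from a laser pulse's round-trip time.

    The pulse travels out to the target and back, so the one-way
    distance is half of (speed of light x elapsed time).
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_time_s(distance_m: float) -> float:
    """Inverse: how long an echo from a target at distance_m takes to return."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A pedestrian about 15 m ahead returns an echo after roughly 100 ns.
    t = round_trip_time_s(15.0)
    print(f"echo after {t * 1e9:.1f} ns -> {tof_distance_m(t):.2f} m")
    # Any pulse arriving 50 ns after firing is read as an object ~7.49 m away,
    # whether it is a genuine reflection or not.
    print(f"pulse at 50 ns -> {tof_distance_m(50e-9):.2f} m")
```

The nanosecond scale of these numbers is why, as the researchers note, an external laser has to be tightly synchronized with the sensor to be interpreted as a reflection at all.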

Using this approach, the researchers were able to erase data for both stationary objects and moving pedestrians. Under test conditions, the attack was launched against an autonomous vehicle and prevented it from braking as it was designed to do when it encountered a pedestrian. The attacker fired the laser from the side of the road at the oncoming car from just over 15 feet away. The study also warned that, in real-world scenarios, an attacker could follow a slow-moving vehicle using basic camera tracking equipment. The researchers used only rudimentary camera tracking software in their experiments.

With more advanced equipment, however, the attack could be carried out from a greater distance. The required technology is fairly simple, but the laser must be precisely synchronized with the LIDAR sensor and continuously re-aimed to track moving cars. S. Hrushikesh Bhupathiraju, a UF doctoral student in Rampazzi's lab and one of the study's lead authors, noted that although the laser must be timed toward the LIDAR sensor with some degree of accuracy to deceive it, the information needed for this synchronization is publicly available from LIDAR manufacturers.

The researchers carried out these experiments to help develop more robust sensor systems. Now that these attacks have been identified, manufacturers of LIDAR systems will need to update their software and adopt different methods of obstacle detection. The researchers are also hopeful that future hardware designs could improve resistance to such attacks.

This is the first time a LIDAR system has been tricked into not detecting obstacles. The research will be presented at the 2023 USENIX Security Symposium and is publicly available online.

Back in 2015, Dr. Jonathan Petit, a principal scientist specializing in connected vehicles and consulting services at Security Innovation and a former research fellow in University College Cork's Computer Security Group, discovered while researching the cyber vulnerabilities of autonomous vehicles that a laser attack could paralyze driverless cars and trick them into taking evasive action. He told IEEE Spectrum, the magazine of the Institute of Electrical and Electronics Engineers, that the attack is quick and easy to carry out using readily available tools, such as a Raspberry Pi or an Arduino, and that it can spoof the car's sensor from up to 100 meters away.

The long-term success of autonomous cars depends on their ability to accurately identify and interpret nearby obstacles in real time. These obstacles might include people, traffic cones, and other vehicles. To achieve precise and reliable detection, most highly automated vehicles combine several perception sources, such as LIDAR, radar, and cameras, through multi-sensor fusion. In theory, the obstacle detection systems in these vehicles always operate at peak performance, unlike distracted or intoxicated drivers. As a result, they are expected to reduce accidents and the high death toll on our roads. However, if a basic laser attack can disable or confuse them, it is time to rethink how these vulnerabilities are addressed before declaring autonomous driving technology fit for use on public roads. This is all the more pressing because such hacks could stunt the growth of the autonomous vehicle industry.