On Monday, November 14, Intel unveiled new software that it says can recognize deepfake videos in real time. The company claims that its “FakeCatcher” is the first real-time deepfake detector in the world, with a 96% accuracy rate and results returned in milliseconds.

Ilke Demir, an Intel researcher, and Umur Ciftci from the State University of New York at Binghamton created FakeCatcher, which uses Intel hardware and software, runs on a server, and is accessed through a web-based platform. To run its AI models for face and landmark detection, the software relies on specialized tools such as the open-source OpenVINO toolkit for deep learning model optimization and OpenCV for processing images and video in real time. The developer teams also provided a comprehensive software stack for Intel’s Xeon Scalable CPUs using the Open Visual Cloud platform. On 3rd Gen Xeon Scalable processors, FakeCatcher can run up to 72 detection streams simultaneously.

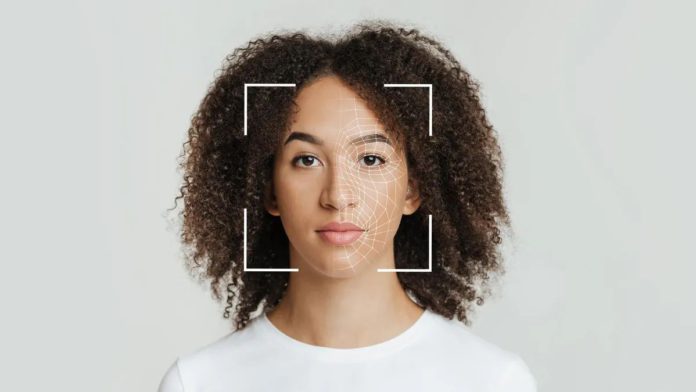

According to Intel, most deep learning-based detectors examine raw data for signs of inauthenticity. FakeCatcher takes the opposite approach and looks for genuine biological cues in real videos, because those cues are not preserved, either spatially or temporally, in fake content. Its method is based on photoplethysmography (PPG), which measures subtle blood flow signals in the pixels of a video: as Intel explains it, the color of our veins changes when our hearts pump blood. These blood flow signals are collected from various parts of the face, and algorithms turn them into spatiotemporal maps. A deep learning model then uses those maps to determine almost immediately whether a video is real or fake.
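To make the idea of a spatiotemporal map more concrete, here is a minimal sketch in Python. It is not Intel's implementation, and every detail in it is an assumption made for illustration: the use of the green channel as a rough PPG proxy, the fixed 2x2 grid standing in for face regions, the function names `region_means`, `ppg_map`, and `synthetic_frames`, and the synthetic frames that brighten and darken at a pulse-like 1.2 Hz. It only shows how per-region color averages over time can be stacked into a regions-by-time map that a classifier could later consume.

```python
from math import pi, sin


def region_means(frame, rows, cols):
    """Average the pixel values inside each cell of a rows x cols grid."""
    height, width = len(frame), len(frame[0])
    means = []
    for r in range(rows):
        for c in range(cols):
            y0, y1 = r * height // rows, (r + 1) * height // rows
            x0, x1 = c * width // cols, (c + 1) * width // cols
            cell = [frame[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            means.append(sum(cell) / len(cell))
    return means


def ppg_map(frames, rows=2, cols=2):
    """Build a (regions x time) map of zero-mean color signals.

    `frames` is a list of 2D grids holding one channel (assumed: green)
    of an already-cropped face. Each output row is one region's signal.
    """
    per_frame = [region_means(frame, rows, cols) for frame in frames]
    signals = [list(series) for series in zip(*per_frame)]
    centered = []
    for series in signals:
        mean = sum(series) / len(series)
        centered.append([value - mean for value in series])
    return centered


def synthetic_frames(n_frames=30, fps=30.0, pulse_hz=1.2, size=4):
    """Hypothetical test input: a flat patch whose brightness pulses."""
    frames = []
    for t in range(n_frames):
        level = 128.0 + 2.0 * sin(2 * pi * pulse_hz * t / fps)
        frames.append([[level] * size for _ in range(size)])
    return frames


face_map = ppg_map(synthetic_frames())
print(len(face_map), "regions x", len(face_map[0]), "frames")
```

In a real pipeline the frames would come from a face detector and landmark tracker rather than a synthetic generator, and the resulting map would be passed to a trained model. Both of those steps are outside the scope of this sketch.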

When evaluated against different datasets, FakeCatcher achieved accuracies of 96%, 94.65%, 91.50%, and 91.07% on FaceForensics, FaceForensics++, CelebDF, and the new Deep Fakes Dataset, respectively.

Intel says FakeCatcher has a number of possible applications, including preventing users from posting malicious deepfake videos to social media and helping news organizations avoid airing misleading content.

Deepfake detection has become more important as deepfake threats proliferate. Those threats include compositional deepfakes, where malicious actors produce several deepfakes to assemble a “synthetic history,” and interactive deepfakes, which give the impression that you are speaking to a real person. This summer, the FBI's Internet Crime Complaint Center reported that it has received more complaints about people using deepfakes, often with voice spoofing, to apply for remote jobs. Some of these impostors pose as job applicants in order to obtain private corporate data. Deepfakes have also been used to impersonate well-known political figures and put provocative statements in their mouths.