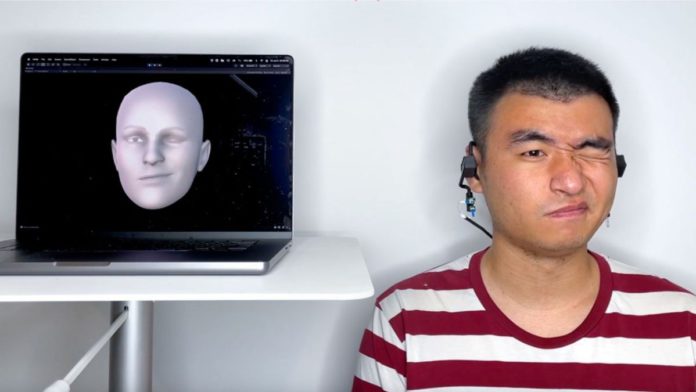

With privacy in mind, Cornell researchers have created a wearable earphone device, or “earable,” that uses sonar to bounce sound off the cheeks and turn the echoes into an avatar of a person’s moving face. The device, called EarIO, was created by a team under the supervision of information science professor François Guimbretière and assistant professor Cheng Zhang. It is compatible with commercially available headsets for hands-free, cordless video conferencing and streams facial expressions in real time to smartphones.

Zhang, who leads the Smart Computer Interfaces for Future Interactions (SciFi) Lab, says that sonar has the edge over camera-based wearable sensors. Devices that track facial movements with a camera are typically large, heavy, and energy-hungry, which makes them far less appealing as wearables. He added that camera wearables collect a lot of private information and would need to connect to a Wi-Fi network and send data back and forth to the cloud, possibly leaving it accessible to hackers. In comparison, acoustic facial tracking can offer better privacy, affordability, comfort, and battery life. Acoustic signals also take less energy to process than images: the EarIO consumes only a quarter of the energy of a similar camera-based device that the SciFi Lab previously created.

The team describes how the EarIO earable works in the Proceedings of the Association for Computing Machinery on Interactive, Mobile, Wearable, and Ubiquitous Technologies. The paper is titled “EarIO: A Low-power Acoustic Sensing Earable for Continuously Tracking Detailed Facial Movements.”

The EarIO earable emits sonar pulses, much as a ship does. Speakers on each side of the earphone emit audio that reflects off the wearer’s cheeks, and a microphone picks up the echoes. As users speak, smile, or raise their eyebrows, the skin stretches and moves, altering the echo profiles. The researchers use deep learning to analyze the data continuously and translate the changing echo profiles into complete facial expressions.
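The echo-profile idea can be illustrated with a short simulation. The sketch below is not the team’s implementation: it assumes a chirp as the probe signal and a plain cross-correlation to recover echo delays, and every name and value in it (`make_chirp`, `simulate_received`, `echo_profile`, the 16 to 20 kHz sweep, the 50 kHz sample rate, the delays) is hypothetical and chosen only for illustration.

```python
import numpy as np


def make_chirp(n_samples, f0, f1, sample_rate):
    """Linear frequency sweep from f0 to f1 Hz (assumed probe signal)."""
    t = np.arange(n_samples) / sample_rate
    sweep_rate = (f1 - f0) / (n_samples / sample_rate)
    return np.sin(2 * np.pi * (f0 * t + 0.5 * sweep_rate * t ** 2))


def simulate_received(chirp, delays, gains, frame_len):
    """Toy microphone frame: a sum of delayed, scaled copies of the chirp."""
    frame = np.zeros(frame_len)
    for delay, gain in zip(delays, gains):
        frame[delay:delay + len(chirp)] += gain * chirp
    return frame


def echo_profile(received, chirp):
    """Cross-correlate the frame with the chirp; peaks mark echo delays."""
    return np.abs(np.correlate(received, chirp, mode="valid"))


# Hypothetical values: a 600-sample chirp inside a 1000-sample frame.
chirp = make_chirp(600, 16_000, 20_000, 50_000)

# Two toy "expressions": the reflecting skin sits at different distances,
# so the echo arrives after a different number of samples.
neutral = echo_profile(simulate_received(chirp, [40], [1.0], 1000), chirp)
smiling = echo_profile(simulate_received(chirp, [55], [1.0], 1000), chirp)

# The change between consecutive profiles is the kind of signal a
# learned model could map to facial movement.
profile_change = smiling - neutral
```

In this toy setup the peak of each profile sits at the simulated echo delay, so a shift in the reflecting surface shows up as a shift in the profile. A real system would stack many such profiles over time and feed them to a trained network rather than reading off a single peak.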

The researchers tested the device on 16 participants, using a smartphone camera to check how accurately the EarIO earable reproduced their faces. In preliminary testing, they found that the earable works whether users are seated or moving around, and that external noises such as wind, ambient road noise, and background talk do not interfere with the acoustic signaling. However, the high sensitivity of the sensing method brings problems of its own. Co-author Ruidong Zhang, a Ph.D. student in information science, said that this sensitivity cuts both ways: it is good because the device can track very small motions, but it is also a drawback because when anything changes in the surroundings, or when the head moves slightly, the device captures that unwanted data too. The researchers want to reduce such interference in future versions.

The EarIO earable has other limitations as well. Its battery life is limited to three hours, processing must be offloaded to a smartphone, and the echo-translating AI system needs 32 minutes of facial data to train before it can recognize expressions for the first time. Even so, the researchers argue that it offers a far more streamlined experience than the recording equipment often used in animation for films, television, and video games.