Amazon has released a new testing tool for Alexa developers who want to increase the number of people who use their voice apps. The Alexa Skill A/B testing tool lets developers set up A/B tests to learn what gets customers to spend more time and money in their app and to come back for more sessions.

In general, A/B testing is a type of experiment in which users are shown one of two or more versions of an experience at random, and statistical analysis is performed to see which version works better for a given conversion objective. A/B testing is used extensively in many sectors, including retail, marketing, and SaaS. It can be set up on a number of channels and mediums, including Facebook advertisements, search results, email newsletter processes, email subject line text, marketing campaigns, sales scripts, and so on. You design the variants, choose a metric, and see which variant has the highest conversion rate. Most commonly, an audience is split between two alternatives, and the audience's reaction helps determine which one should be used universally.
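The statistical step described above can be sketched with a two-proportion z-test, one common way to compare conversion rates between two variants. This is a minimal illustration, not the analysis the Alexa tool itself performs; the function name and the visitor and conversion counts are invented for the example.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare variant B against variant A.

    Returns (relative_lift, z_score, two_sided_p_value).
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    relative_lift = (p_b - p_a) / p_a
    return relative_lift, z, p_value

# Hypothetical counts: 4,000 users per variant, 200 vs. 230 conversions.
lift, z, p = two_proportion_z_test(200, 4000, 230, 4000)
print(f"relative lift: {lift:.1%}, z = {z:.2f}, p = {p:.3f}")
```

With these made-up counts the relative lift is 15%, yet the p-value stays above the conventional 0.05 threshold, which is one reason an experiment may need to run for weeks before a winner is clear.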

Voice apps use this idea to find the best key phrases to respond to and the best responses for encouraging repeat usage by reducing friction in navigating the app. Setting up these tests can be time-consuming and inconvenient, which is why Alexa's new A/B testing tool aims to make it easier. Setup takes only a few hours, and results can be evaluated over several weeks.
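For readers curious how a voice app might split its users between two prompts without such a tool, one common approach is deterministic hash-based bucketing. The sketch below is an assumption for illustration only: the function name, the experiment label, and the 50/50 split are hypothetical and do not describe how Alexa assigns customers.

```python
import hashlib

def assign_variant(user_id, experiment="prompt-length-test", treatment_share=0.5):
    """Deterministically map a user to 'control' or 'treatment'.

    Hashing the experiment name together with the user ID gives each user
    a stable bucket, so the same user hears the same prompt every session.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Turn the first 8 bytes of the hash into a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "treatment" if bucket < treatment_share else "control"

# Example: the assignment is stable across calls for the same user.
print(assign_variant("user-123"))
print(assign_variant("user-123"))
```

Keeping the assignment stable per user matters for metrics such as next-day retention, where the same customer must see the same variant on each visit.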

Amazon highlighted UK-based voice design studio Vocala as an example of a customer that used A/B testing to discover that a certain prompt was 15% more successful in driving paid conversions. The test took the company less than two hours to set up.

“We analyzed the results of our experiment through a panel. After a few weeks, we could clearly see that the longer prompt was 15% more effective at generating paid conversions,” said James Holland, lead voice developer at Vocala.

According to a post on the Alexa developer blog, developers can use Alexa A/B testing to run experiments that measure customer engagement, retention, churn, and monetization. The tool tracks a variety of metrics, including customer perceived friction rate, in-skill purchase (ISP) offers, ISP sales, ISP accepts, ISP offer accept rate, skill next-day retention, skill dialogs, and skill active days. The new features follow up on Amazon's announcement last summer of Alexa Skill A/B Testing, which allowed users to try out new or updated Alexa skills as part of the Alexa Skill Design Guide, which was also announced at the same event.


Last year, during its third annual Alexa Live event, Amazon introduced Alexa Skill Components to help developers build skills more quickly by inserting basic skill code into existing speech models and code libraries. It also announced improvements to the Alexa Skill Design Guide, which codifies lessons learned by Amazon's developers and the larger skill-building community.

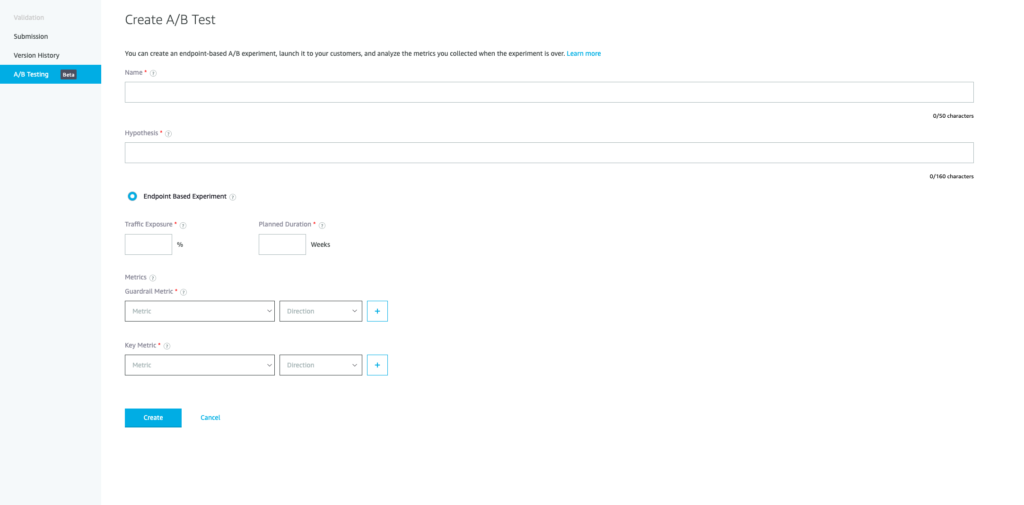

To get started with Alexa A/B testing, go to the Alexa Developer Console, choose the skill you want to run an experiment on, and click “Certification.” Look for the “A/B Testing” section and select “Create” from the drop-down menu. In the Experiment Analytics area, you can see experiment-related statistics for both the live skill version (control) and the certified skill version (treatment).